User Characteristics and Behaviour in Operating Annoying Electronic Products

Chajoong Kim

School of Design and Human Engineering, Ulsan National Institute of Science and Technology, Ulsan, South Korea

Despite the enormous progress in technology and design over the last decades, consumer dissatisfaction is increasing, mainly because of soft usability problems: problems that have nothing to do with technical failure. Earlier studies found that the types of soft usability problems are influenced by product properties on the one hand and by user characteristics on the other. These studies were all based on retrospective data. However, common practice in the manufacturing industry is to test prototypes through user trials, that is, by testing products in actual use. Therefore, this paper discusses an experiment that investigated the relationship between product properties and user characteristics by means of a user trial with two products whose usability is known to be problematic. In total, 84 participants aged 20 to 74 took part in this study. The experiment was conducted in the USA, South Korea, and the Netherlands, which allowed us to compare this actual-use situation with our previous retrospective studies across different cultures. The study concludes that soft usability problems differ between actual use and retrospective evaluation. The kind of soft usability problems experienced depends partly on both user characteristics and product properties. The role of users’ expectations, as well as their follow-up behaviour in relation to soft usability problems, is discussed.

Keywords – Usability, User Characteristics, User Behaviour, Culture

Relevance to Design Practice – An experiment was conducted to establish the relationship between soft usability problems and user characteristics in actual use. The results provide a better understanding of the interaction between target user, product type, and soft usability problems in the product development process.

Citation: Kim, C. (2014). User characteristics and behavior in operating annoying electronic products. International Journal of Design, 8(1), 93-108.

Received May 11, 2012; Accepted September 22, 2013; Published April 30, 2014.

Copyright: © 2014 Kim. Copyright for this article is retained by the author, with first publication rights granted to the International Journal of Design. All journal content, except where otherwise noted, is licensed under a Creative Commons Attribution-NonCommercial-NoDerivs 2.5 License. By virtue of their appearance in this open-access journal, articles are free to use, with proper attribution, in educational and other non-commercial settings.

*Corresponding Author: cjkim@unist.ac.kr

Chajoong Kim earned his PhD in Design for Usability from the faculty of Industrial Design Engineering, Delft University of Technology, and holds an MSc in Design for Interaction from the same university. He is an Assistant Professor at the School of Design and Human Engineering, Ulsan National Institute of Science and Technology, South Korea. He worked on the Design for Usability project from 2007, focusing on how user characteristics are related to usability experience. His main research interests include user diversity, cultural differences, and cognitive aspects of product experience. He has authored several books on design for usability and soft usability problems with everyday products, and has published in journals such as the Journal of Design Research.

Introduction

The consumer electronics market is evolving rapidly, and manufacturers are under tremendous competitive pressure to be first to market. This competition does not always result in consumer satisfaction, because consumers’ expectations of product quality and usability deviate from their experiences with those products, and because users differ in their preferred product properties (Broadbridge & Marshall, 1995; Goodman, Ward, & Broetzmann, 2002; Norman, 2004). Unfortunately, rushing a product to market often makes matters worse, because it keeps manufacturers from taking the various aspects of users’ experiences into account in their product development process. In addition, the concept and form of consumer electronic products have changed over the years thanks to advances in science and technology. Take, for instance, the Walkman, popular in the 1980s, which has been replaced by the digital audio player, a much more compact device with more functions. However, this advancement has not necessarily resulted in a positive user experience. According to recent studies, consumer dissatisfaction is increasingly caused by the ‘soft usability’ problems people experience (den Ouden, Yuan, Sonnemans, & Brombacher, 2006; Kim, Christiaans, & van Eijk, 2007; Söderholm, 2007; Thiruvenkadam, Brombacher, Lu, & den Ouden, 2008). As opposed to hardware-related problems, soft usability problems have almost nothing to do with technical failure (also referred to as no-fault-found, or NFF). Typical ‘hard’ problems with a washing machine, for instance, might be that the water supply function does not supply water or that the machine does not start after the ‘on’ button is pushed. Both of these problems are a consequence of technical hardware failures. By contrast, a ‘soft’ problem with a washing machine might be that the user does not understand how to program it, even though the program works fine if the user follows the right procedure.

As earlier studies have shown, soft usability problems are influenced by product characteristics such as functional complexity and lack of structural elements (Christiaans & Kim, 2009; Donoghue & de Klerk, 2006; Kim & Christiaans, 2012; Kim et al., 2007; Law, Roto, Hassenzahl, Vermeeren, & Kort, 2009). However, dissatisfaction with products is not only a consequence of poor quality or other deficiencies; it often also results from a mismatch between product properties, user characteristics, and context. With knowledge of this interaction between user characteristics, product properties, and context, or of any mismatch between them, a product development team can identify expected soft usability problems at the very beginning of a project.

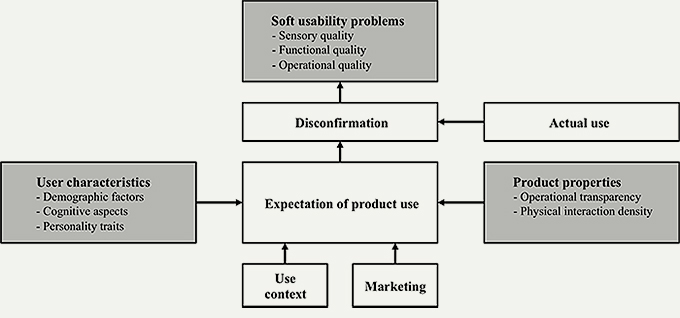

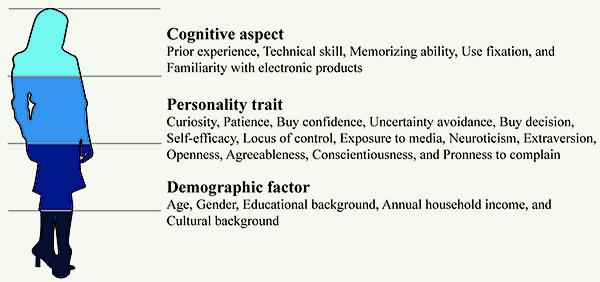

However, previous studies are not yet sufficient to explain this interaction, because both a theoretical foundation and empirical evidence are lacking. To obtain a complete overview of all aspects involved in this interaction, we developed a conceptual framework adapted from a framework of consumer dissatisfaction (Donoghue & de Klerk, 2006); it is presented in Figure 1. As a result of the interaction between user characteristics, product properties, use context, and marketing, a consumer will form certain expectations of the specific product and its use. However, these initial expectations might be disconfirmed by the actual use of the product.

Figure 1. Conceptual framework of the study (based on Donoghue & de Klerk, 2006).

Hence, the aim of our first studies was to map the different factors involved in the formation of soft usability problems. We started with two survey studies in which people were asked to specify which of their electronic household products had been most annoying, and why, even though these products worked well technically. In addition, the survey asked about the demographic and personality characteristics of the respondents. Surprisingly, many non-technical problems were mentioned for a broad range of products.

On the basis of the survey results, the soft usability problems were divided into the following six categories: lack of product understanding, poor performance, sensory dissatisfaction, lack of structure, maintenance problems, and constraint usability (Kim et al., 2007). The same study indicated that cognitive load in operating the product and physical interaction density in use might explain some of the problems. Cognitive load was defined in terms of functional complexity, expressed as the number of functions, the transparency (black-box character), and the feedback. Interaction density was defined as the frequency and duration of physical interactions with the product during use.
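To make the definition of interaction density concrete, the following minimal sketch computes its two components, frequency and duration, for one use session. It assumes, hypothetically, that each physical interaction has been logged as a (start, end) pair in seconds from the start of the session; the function name and the per-minute normalization are illustrative choices, not part of the original study.

```python
from dataclasses import dataclass


@dataclass
class InteractionDensity:
    count: int            # number of physical interactions (frequency)
    total_seconds: float  # summed duration of all interactions
    per_minute: float     # interactions per minute of session time


def interaction_density(intervals, session_seconds):
    """Summarize hypothetical (start, end) interaction logs for one session."""
    if session_seconds <= 0:
        raise ValueError("session_seconds must be positive")
    for start, end in intervals:
        if end < start:
            raise ValueError(f"interval ({start}, {end}) ends before it starts")
    count = len(intervals)
    total = sum(end - start for start, end in intervals)
    return InteractionDensity(count, total, count / (session_seconds / 60.0))


# Toy logs, not study data: a product touched only at the start and end of use,
# versus a product handled repeatedly throughout a 10-minute session.
clock = interaction_density([(0, 20), (580, 590)], session_seconds=600)
player = interaction_density([(t, t + 5) for t in range(0, 600, 30)], session_seconds=600)
```

Under these assumptions, the first log yields 2 interactions lasting 30 seconds in total (0.2 per minute), and the second yields 20 interactions lasting 100 seconds (2.0 per minute), mirroring the low versus high density contrast used later in the paper.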

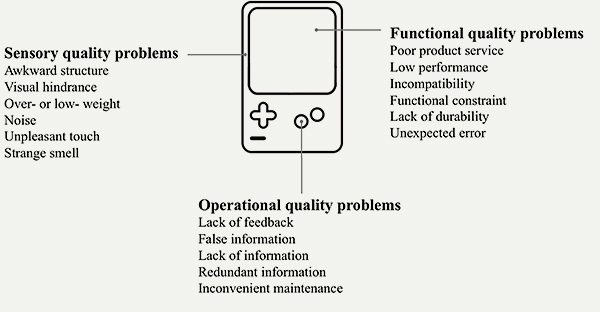

To establish a connection with existing product quality theories, the six soft problem categories were clustered into three product quality categories: sensory, functional, and operational quality (Dantas, 2011; Madureira, 1991). In this way, soft usability problems could be understood more systematically in terms of design principles. Although the three qualities described below concern ‘soft’ problems, some of these qualities clearly build upon hardware properties.

Sensory quality is related to sensory perception. By means of our perceptive faculties, assessments through the senses are made of the structure, the visibility, the weight, the sound, the texture, and the smell of a product. The responses to this quality are usually immediate and momentary, and lead to pleasant or unpleasant experiences according to the different senses. User dissatisfaction with this quality is related to awkward product structure, visual hindrance, weight (too high or too low), noise, irritating touch, and unpleasant smell.

Functional quality is related to how well the instrumental aim of a product is achieved. This quality is evaluated through the results obtained in using the product, and is often experienced only after repeated use over a long period. Lack of this quality can be caused by both hard and soft problems, but in this paper we concentrate on the latter. Soft problems related to this quality mainly result from the technological limitations of products, for instance functional constraints such as lack of functions, incompatibility with other equipment, low performance (for example, slow reaction and short battery life), irregular, unexpected errors, and frequent breakdowns. They are also related to poor product service.

Operational quality is related to the user’s cognitive efforts and care in operating a product. Problems related to this quality have to do with ease of use, complexity in operating the product, and the need for maintenance; for example, difficulty in understanding the functions, confusing navigation, requiring too much care, and inconvenient maintenance. Any shortcoming in operational quality often results from lack of information and feedback.

These three product quality categories also encompass the root causes of soft usability problems as determined in our previous study (Kim et al., 2007). Therefore, the framework below was used to code the most annoying problems participants experienced (see Figure 2).

Figure 2. Three categories of soft usability problems.
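The coding step can be pictured as a lookup from a problem aspect to one of the three qualities. The sketch below is illustrative only: the aspect labels are paraphrased from the descriptions of sensory, functional, and operational quality above, while the dictionary and the `code_problem` helper are hypothetical names. It also encodes the rule, described in the coding section of this paper, that the cause of a problem takes priority over its outcome; falling back to the outcome when the cause is unmapped is an added assumption.

```python
# Hypothetical aspect-to-quality mapping paraphrased from the quality
# descriptions in the text; the labels are illustrative, not the study's codebook.
ASPECT_TO_QUALITY = {
    "awkward structure": "sensory",
    "tiny buttons": "sensory",
    "visual hindrance": "sensory",
    "weight": "sensory",
    "noise": "sensory",
    "irritating touch": "sensory",
    "unpleasant smell": "sensory",
    "lack of functions": "functional",
    "incompatibility": "functional",
    "low performance": "functional",
    "unexpected errors": "functional",
    "frequent breakdown": "functional",
    "poor service": "functional",
    "difficult to understand": "operational",
    "confusing navigation": "operational",
    "too much care": "operational",
    "inconvenient maintenance": "operational",
}


def code_problem(cause, outcome=None):
    """Return the quality category of a problem.

    The cause takes priority over the outcome. Using the outcome when the
    cause is unmapped is an assumption of this sketch.
    """
    if cause in ASPECT_TO_QUALITY:
        return ASPECT_TO_QUALITY[cause]
    if outcome is not None and outcome in ASPECT_TO_QUALITY:
        return ASPECT_TO_QUALITY[outcome]
    raise KeyError(f"unmapped aspect: {cause!r}")


# Tiny buttons that also make the product hard to operate are coded by cause.
example = code_problem("tiny buttons", outcome="difficult to understand")
```

With this mapping, `example` is `"sensory"`, matching how the paper codes the tiny buttons of the alarm clock.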

In the two survey studies, all three categories of the soft usability problems were approximately evenly distributed: in the first study 25% sensory, 41% functional, and 34% operational problems were found (Kim et al., 2007); and in the second study 33% sensory, 32% functional, and 35% operational problems were found (Kim & Christiaans, 2012). Moreover, it was found that the kind of problems was partly dependent on and/or showed interaction with user characteristics such as age and culture (Christiaans & Kim, 2009; Donoghue & de Klerk, 2006; Kim & Christiaans, 2012; Kim et al., 2007; Law et al., 2009). For instance, our study showed that young people were more likely to mention problems related to functional quality, and older people tended to stress operational problems. Regarding complaints based on cultural differences, when comparing Dutch and South Korean people, Koreans complained more about sensory quality than Dutch people.

However, common practice in the manufacturing industry is to test prototypes through user trials, in which manufacturers try to gain insights into their new products in actual use. The think-aloud method and the focus group method are common in industry, but retrospective data in combination with actual use have seldom been used. When people are confronted with real-time operation problems, it is interesting to observe whether the problems they experience are expressed in terms of the same (lack of) product qualities as in our survey studies, and whether the same interaction with user characteristics is present. For this reason, an experiment was set up with people from three countries to study the actual operation of electronic products, using a different approach from the previous survey studies. This study therefore investigates whether soft usability problems are related to product properties, user characteristics, and expectations in the same way as found in the previous survey studies. User characteristics in this study included demographic factors such as cultural background, cognitive aspects, and personality traits, as well as consumers’ expectations.

Method

Because this is an exploratory study, it follows a mixed methods approach. The emphasis is on quantitative analysis; however, the aim is not high external validity but rather to gather introspective data that can be compared with the previous retrospective data. The experiment was carried out in three countries: the USA, South Korea, and the Netherlands. These three countries were chosen because of their different cultural backgrounds. Using Hofstede’s cultural dimensions, the United States was characterized by individualism, masculinity, and a short-term oriented culture; South Korea by collectivism, high uncertainty avoidance, and long-term orientation; and the Netherlands by horizontal hierarchy, femininity, and risk-taking (Hofstede, 2003). The focus was on individual differences in actual product use.

Participants

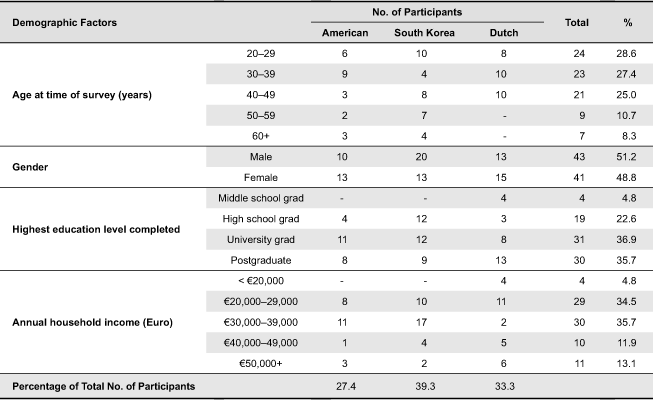

Participants in the experiment included 23 people from the USA, 33 from South Korea, and 28 from the Netherlands, all of whom lived in their respective home country at the time of the experiment. They were recruited via advertising and selected to provide a balance across gender and age groups. Detailed demographic characteristics of the participants are shown in Table 1.

Table 1. Demographic characteristics of the sample (N = 84).

Instruments and Measures

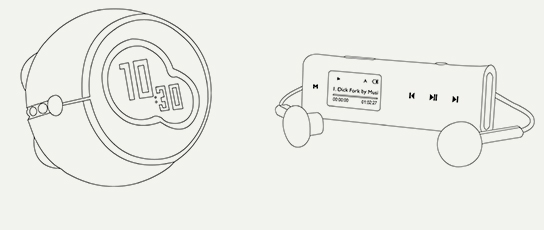

To create an experimental situation, two electronic consumer products were selected: an alarm clock and an MP3 player (Figure 3). Both products had attracted many consumer complaints in product reviews at Dutch online shops (the alarm clock) and at global shopping sites such as Amazon (the MP3 player), all related to soft usability problems. Two products were used instead of one, on the one hand to avoid bias caused by a particular type of product, and on the other hand to examine the effect of product properties on the type of soft usability problems. The two products differ in terms of operational transparency and physical interaction density, as defined above. The alarm clock had a few conventional functions, including a clock, an alarm with four different alarm sounds, and an FM radio (defined as ‘operationally transparent’), and physical interaction between the user and the product occurs only at the beginning and at the end of use (defined as ‘low physical interaction density’). The selected MP3 player was highly functional but also very compact; it had various main functions, such as music playback, FM radio, voice recording, and a USB memory stick, as well as many secondary functions, such as shuffling songs, sound tone, and play mode (defined as ‘operationally opaque’), and physical interaction with the product is quite intensive during use (defined as ‘high physical interaction density’).

Figure 3. Alarm clock (left) and MP3 player (right).

The sessions were videotaped with consent of the participants. Observations were based on these videotapes, and included recordings of the comments made by the participants during task operation, time taken for the subsequent tasks and task completion, and use of the manuals for each of the products. In the final interviews with the participants, these data were used to stimulate verbalizations of their experiences with the tasks.

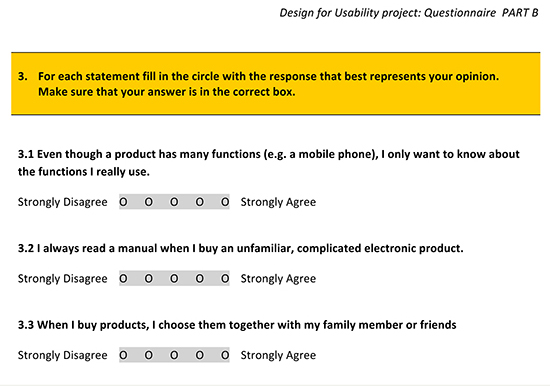

Based on existing tests, we developed a questionnaire to measure user characteristics such as demographic factors, personality traits, and cognitive aspects (see Figures 4 and 5).

Figure 4. User characteristics measured.

Figure 5. An example of the questionnaire part B.

For most questions a five-point scale was used, while some questions were dichotomous (requiring a yes or no answer) or multiple choice. Questions measuring cognition (e.g., memorizing ability) and personality (e.g., self-efficacy and locus of control) were adopted from free online cognition and psychology tests. The NEO Five-Factor Inventory (NEO-FFI; McCrae & Costa Jr, 2004) was used to assess agreeableness, conscientiousness, extraversion, neuroticism, and openness. In the analysis, a higher value on a variable generally means ‘more of that characteristic.’ The exceptions are the nominal variables gender and culture; for age, education, and household income, a higher value likewise means ‘more of that characteristic.’

Follow-up (re)actions in relation to soft usability problems were measured via retrospective interviews after product trials.

Procedure

The American and South Korean participants were invited to take part in the experiment at a location convenient to them, such as their home, a cafeteria, or a library meeting room. The Dutch participants were invited to the Product Evaluation Laboratory at the university where the researchers work (Figure 6).

Figure 6. Sample pictures of the experiment in the United States, South Korea, and the Netherlands respectively.

First, one of the researchers explained the aim of the experiment to the participants using a pre-determined script. The participants were then asked to fill out the first part of the questionnaire. To prevent the participants from becoming bored and losing concentration, the questionnaire was divided into two parts: Part A and Part B. In addition, privacy-sensitive questions, such as those about household income and personality, were asked in the second part. Part A covered cognitive aspects, and Part B dealt with general information about the participant and her/his personality traits.

Next, the participants were asked what expectations they had about the alarm clock or the MP3 player before using them. To minimize any ordering effect, the order of the alarm clock and MP3 player trials was alternated between users. The questions in this session were:

- Have you used an alarm clock (an MP3 player) before or are you using one at home?

- What expectations would you have about an alarm clock (an MP3 player) if you needed to replace it with a new one?

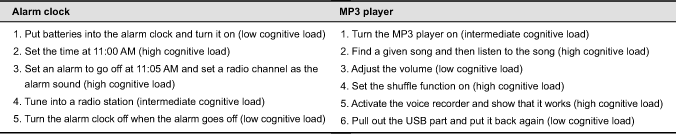

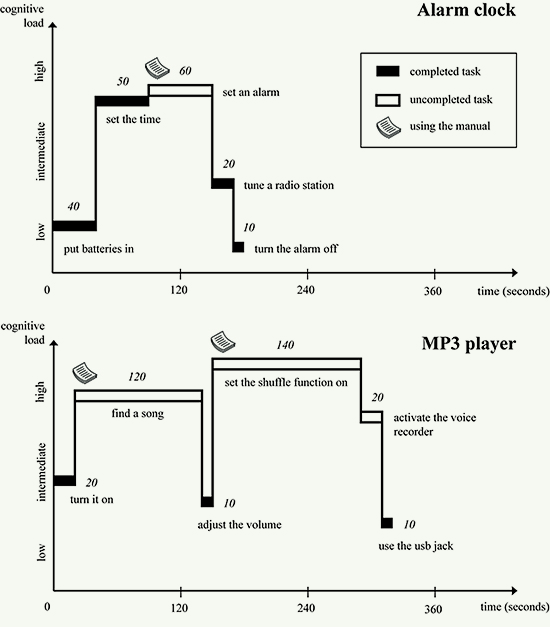

Then the participants were given several common tasks to be performed on the alarm clock (or the MP3 player), which aimed at letting them experience it at different levels of cognitive load, ranging from simple to complicated tasks. The participants were allowed to use the instruction manual for both products. All sessions were videotaped. The participants verbalized their thoughts (concurrent think aloud protocol) while they performed the operation tasks. These tasks are presented in Table 2.

Table 2. Instructions for the tasks to be performed with the alarm clock and the MP3 player.

The operation tasks in the experiment were divided into three levels based on cognitive load demand: tasks requiring low cognitive load, tasks requiring intermediate cognitive load, and tasks requiring high cognitive load. These terms refer to cognitive load generally required for the individual to complete a task. For example, high cognitive load tasks (such as ‘set the shuffle function on’) require high cognitive thinking and problem-solving, while low cognitive load tasks (such as ‘put the batteries in’) refer to smooth execution without demanding high cognitive thinking and problem-solving. Tasks requiring intermediate cognitive load include an intermediate level of cognitive thinking. See Table 2 for some more examples.

After completing the tasks, the participants filled out the last part of the questionnaire. Next, the participants were asked to operate the MP3 player (or the alarm clock) following the same procedure. Finally, a retrospective interview was conducted with the participants to find out about their overall experience. During the interviews, observation data from all the videotaped sessions of the participant, such as time taken, task completion rate, and use of the product instruction manual, were used to remind the participant of their use experience and to stimulate their evaluation with regard to soft usability problems. Immediately after completing the tasks, each participant was asked which of the many problems they experienced had been the most annoying, and whether that one problem would lead them to return the product. The problems were classified into one of the three aforementioned product quality categories. Lastly, errors and particular behaviour of the participants that occurred during product interactions were discussed.

Coding Real-time Soft Usability Problems

Since the experiment aimed to let the participants experience soft usability problems in real time, the coding of these problems was based on the scheme derived from the previous retrospective surveys, that is, the three product quality categories of soft usability problems described above: sensory, functional, and operational quality. The participants were also asked to mention the most annoying problem with each product. The author and two scientists from the same faculty independently ‘scored’ all the problems into one of the three categories. The aspects in Figure 2 covered all the problems: an independent coder first sorted each problem into one of the aspects specified in the figure, and these aspects were then summarized into the three categories. Inter-rater reliability was high (Cohen’s Kappa = .85). Some of the problems were difficult to categorize because they were related to a combination of two qualities. In such cases, the cause of the problem took priority over its outcome. For example, difficulty in pressing the tiny buttons of the alarm clock was categorized as a sensory quality problem, even though the small size of the buttons also led to a problem in operating the product.
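The reported inter-rater reliability can be illustrated with a minimal sketch of Cohen’s kappa, which compares the observed agreement between two coders with the agreement expected by chance from each coder’s category proportions. The function name and the example codes below are hypothetical. Cohen’s kappa is defined for two coders; the text does not state how the value was computed across more than two coders (averaging pairwise kappas or using Fleiss’ kappa are common options), so this sketch covers only a single pair.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two coders assigning nominal categories to the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("ratings must be non-empty and of equal length")
    n = len(rater_a)
    # Observed agreement: share of items both coders put in the same category.
    p_o = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement: products of the coders' marginal proportions, summed over categories.
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    p_e = sum(counts_a[c] * counts_b[c] for c in counts_a) / (n * n)
    if p_e == 1:
        raise ValueError("kappa is undefined when chance agreement is 1")
    return (p_o - p_e) / (1 - p_e)


# Hypothetical codes for four problems, not data from the study.
coder_1 = ["sensory", "sensory", "operational", "operational"]
coder_2 = ["sensory", "operational", "operational", "operational"]
kappa = cohens_kappa(coder_1, coder_2)
```

Here the coders agree on three of four problems (p_o = 0.75), chance agreement is 0.5, and kappa is therefore 0.5.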

Results

The results are based on a combination of three measures: observations made during the experimental sessions, the retrospective interviews at the end, and the questionnaire. Because of the relationships in the data from these measures, the presentation of the results will follow the logic of the content.

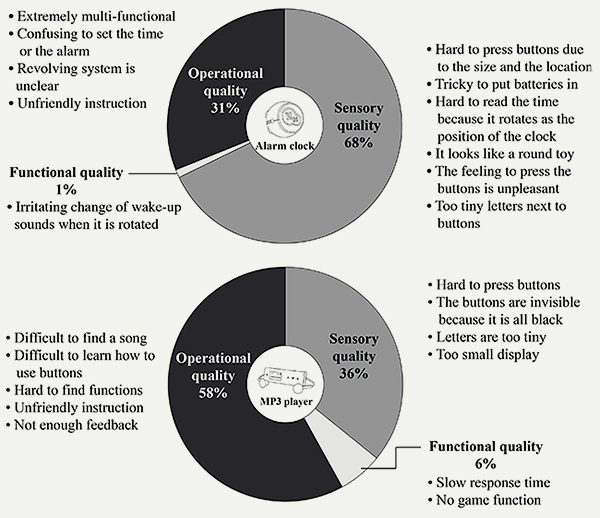

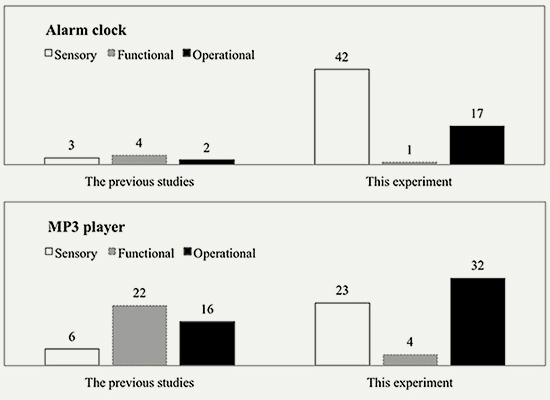

Soft Usability Problem Categories and Product Property

Data collected about the problems experienced by users with the two products were derived from the retrospective interviews. The examples of soft usability problems and percentage distribution of the problems grouped into the three product quality categories for the alarm clock and the MP3 player are shown in Figure 7. There is a significant difference between the two products in problems experienced [χ2(1, N = 84) = 13.93, p < .001]. With the alarm clock, the participants mainly complained about the sensory quality such as unpleasant sound or ugly shape, followed by problems with operational quality such as confusion about setting an alarm. In the experiment with the MP3 player, the most reported problems were related to operational quality such as difficulty in finding functions, followed by sensory quality problems such as buttons that were hardly visible. There were very few problems regarding the functional quality of the two products.

Figure 7. Examples of soft usability problems and percentage distribution of the problems grouped into the three product quality categories for the alarm clock (top graph) and the MP3 player (bottom graph).
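The comparison between the two products rests on a chi-square test of independence on a contingency table of counts (product by problem category). The sketch below computes the Pearson statistic and its degrees of freedom in plain Python; the counts are toy numbers, not the study’s data, and the helper name is hypothetical. A p-value is then read from a chi-square distribution with those degrees of freedom. Note that `scipy.stats.chi2_contingency` applies Yates’ continuity correction by default when there is one degree of freedom, so its statistic would differ from this uncorrected version for a 2 x 2 table.

```python
def chi_square_independence(table):
    """Pearson chi-square statistic and degrees of freedom for an r x c table of counts.

    No continuity (Yates) correction is applied.
    """
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    n = sum(row_totals)
    stat = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            # Expected count under independence: row total * column total / grand total.
            expected = row_totals[i] * col_totals[j] / n
            if expected == 0:
                raise ValueError("expected count of zero; drop empty rows or columns")
            stat += (observed - expected) ** 2 / expected
    dof = (len(table) - 1) * (len(table[0]) - 1)
    return stat, dof


# Toy 2 x 2 table: rows are two products, columns are sensory vs. operational problems.
stat, dof = chi_square_independence([[10, 20], [20, 10]])
```

For this toy table every expected count is 15, giving a statistic of 20/3 (about 6.67) with one degree of freedom.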

Soft Usability Problems and User Characteristics

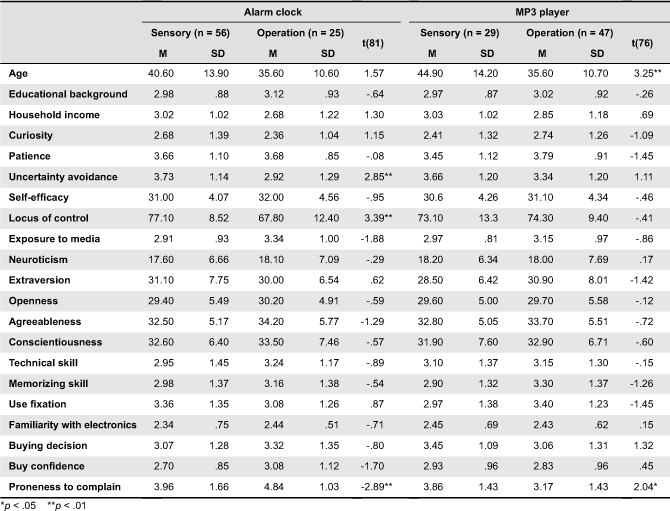

Which user characteristics are related to the occurrence of each soft usability problem, and how do they interact with the perceived product qualities? Tables 3 and 4 present the means and standard deviations of user characteristics for two of the three qualities: sensory and operational. Because very few problems were reported regarding functional quality, this quality is not included in the tables. To test the significance of the relationships, a t-test was used for the continuous variables (Table 3), and a chi-square test for the categorical variables (Table 4). For the alarm clock, significant variables include uncertainty avoidance, locus of control, proneness to complain, and culture. For the MP3 player, significant variables include age (the older the participant, the lower the tendency to mention operational problems), proneness to complain (the higher the score, the more sensory problems were reported), and prior experience (the more prior experience the participant had, the more operational problems they reported).

Table 3. Soft usability problems and related user characteristics (continuous variables) in alarm clock and MP3 player.

Table 4. Soft usability problems and related categorical variables in the alarm clock and MP3 player tested by χ2 in respectively a 2x2 and 2x3 table.
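For the continuous variables, the comparison amounts to an independent-samples t-test between participants who reported a sensory problem and those who reported an operational problem. The text does not say which variant was used; this minimal sketch assumes Student’s pooled-variance t-test (Welch’s test, which does not pool variances, is a common alternative). The function name and the toy scores are hypothetical.

```python
import math
from statistics import mean, variance


def pooled_t_test(group1, group2):
    """Student's independent-samples t statistic with pooled variance.

    Returns (t, degrees_of_freedom); the p-value comes from a t distribution.
    """
    n1, n2 = len(group1), len(group2)
    if n1 < 2 or n2 < 2:
        raise ValueError("each group needs at least two observations")
    # statistics.variance uses the sample (n - 1) denominator.
    pooled = ((n1 - 1) * variance(group1) + (n2 - 1) * variance(group2)) / (n1 + n2 - 2)
    se = math.sqrt(pooled * (1 / n1 + 1 / n2))
    return (mean(group1) - mean(group2)) / se, n1 + n2 - 2


# Toy scores (e.g., proneness to complain) for two problem groups, not study data.
t, df = pooled_t_test([1, 2, 3], [4, 5, 6])
```

With these toy scores the group means differ by 3, the pooled variance is 1, and t is about -3.67 with 4 degrees of freedom.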

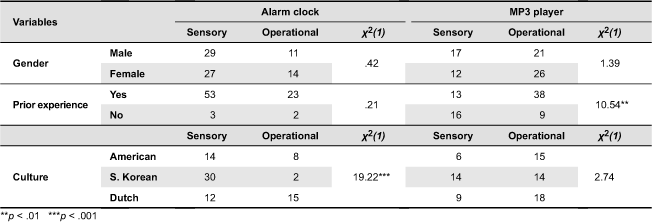

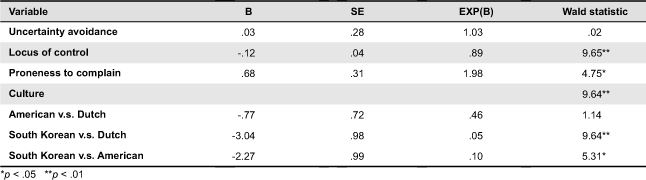

These findings do not yet show whether any of these variables has a real effect on the dependent variable (the type of problem) once the influence of the other variables is taken into account. For this reason, a binary logistic regression was used. However, given the large number of independent variables, only some of the more significant variables were selected for the analysis. See Tables 5 and 6 for the results.

Table 5. Summary of binary logistic regression analysis predicting soft usability problems of the alarm clock.

Table 6. Summary of binary logistic regression analysis predicting soft usability problems of the MP3 player.
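Binary logistic regression estimates coefficients by maximum likelihood, and the Wald criterion for a coefficient is (b / SE)², compared against a chi-square distribution with one degree of freedom. The models in Tables 5 and 6 include several predictors; for clarity, this sketch fits a single predictor by Newton-Raphson and assumes the outcome is not perfectly separated by that predictor. The function names and the toy data (x = prior experience, y = 1 for an operational problem) are hypothetical, not the study’s data.

```python
import math


def _score_and_information(b0, b1, x, y):
    """Gradient of the log-likelihood and entries of the 2 x 2 information matrix."""
    g0 = g1 = i00 = i01 = i11 = 0.0
    for xi, yi in zip(x, y):
        p = 1.0 / (1.0 + math.exp(-(b0 + b1 * xi)))
        w = p * (1.0 - p)
        g0 += yi - p
        g1 += (yi - p) * xi
        i00 += w
        i01 += w * xi
        i11 += w * xi * xi
    return g0, g1, i00, i01, i11


def logistic_wald(x, y, max_iter=100, tol=1e-10):
    """Fit P(y = 1) = 1 / (1 + exp(-(b0 + b1 * x))) by Newton-Raphson.

    Returns (b0, b1, se_b1, wald_b1) with wald_b1 = (b1 / se_b1) ** 2.
    """
    b0 = b1 = 0.0
    for _ in range(max_iter):
        g0, g1, i00, i01, i11 = _score_and_information(b0, b1, x, y)
        det = i00 * i11 - i01 * i01
        if det <= 0:
            raise ValueError("information matrix is singular")
        # Newton step: solve (information matrix) * step = gradient.
        step0 = (i11 * g0 - i01 * g1) / det
        step1 = (i00 * g1 - i01 * g0) / det
        b0, b1 = b0 + step0, b1 + step1
        if abs(step0) + abs(step1) < tol:
            break
    # Standard error from the inverse information matrix at the estimates.
    _, _, i00, i01, i11 = _score_and_information(b0, b1, x, y)
    se_b1 = math.sqrt(i00 / (i00 * i11 - i01 * i01))
    return b0, b1, se_b1, (b1 / se_b1) ** 2


# Toy data: x = 1 if the participant had prior experience, y = 1 for an operational problem.
x = [0, 0, 0, 0, 1, 1, 1, 1]
y = [0, 0, 0, 1, 0, 1, 1, 1]
b0, b1, se_b1, wald = logistic_wald(x, y)
```

With a single binary predictor the slope equals the log odds ratio, here ln 9 (about 2.20), with a standard error of sqrt(8/3); statistical packages fit the multi-predictor case in the same way using full matrix algebra.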

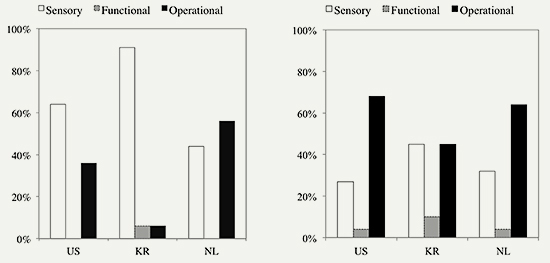

For the alarm clock, the Wald criterion demonstrates that locus of control, proneness to complain, and culture make a significant contribution to the prediction. Participants with a strong internal locus of control tend to complain more about sensory quality. The average score on ‘Proneness to complain’ is higher among the participants who complained about operational quality than among those who complained about sensory quality. There are significant cultural differences in soft usability problems (see also Figure 8): the Dutch and American participants were more likely than the South Koreans to complain about operational quality. Although uncertainty avoidance did not make a significant contribution in the regression for the alarm clock, a Kruskal-Wallis test indicated that uncertainty avoidance is significantly affected by culture [H(2) = 12.98, p < .01], with the highest average score calculated for the South Koreans (50.8), followed by the Americans (40.9) and the Dutch (29.4).

Figure 8. Percentage distribution of the soft usability problems grouped into the three product quality categories for the alarm clock (left chart) and the MP3 player (right chart) among three countries.
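The Kruskal-Wallis test compares three or more groups (here, the three countries) on ranks rather than raw scores. This minimal sketch pools all scores, assigns average ranks to ties, computes H from each group’s rank sum, and applies the usual tie correction; H is then compared with a chi-square distribution with k - 1 degrees of freedom. The function names and toy scores are hypothetical.

```python
from collections import Counter


def average_ranks(values):
    """Ranks starting at 1, with tied values sharing the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1  # sorted positions i..j hold ranks i+1..j+1
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks


def kruskal_wallis_h(*groups):
    """Tie-corrected Kruskal-Wallis H statistic and its degrees of freedom."""
    pooled = [v for g in groups for v in g]
    n = len(pooled)
    ranks = average_ranks(pooled)
    total, start = 0.0, 0
    for g in groups:
        rank_sum = sum(ranks[start:start + len(g)])
        total += rank_sum ** 2 / len(g)
        start += len(g)
    h = 12.0 / (n * (n + 1)) * total - 3 * (n + 1)
    # Tie correction: divide by 1 - sum(t^3 - t) / (n^3 - n) over groups of tied values.
    ties = sum(t ** 3 - t for t in Counter(pooled).values())
    correction = 1 - ties / (n ** 3 - n)
    if correction == 0:
        raise ValueError("all values are tied; H is undefined")
    return h / correction, len(groups) - 1


# Toy scores for three countries, not study data.
h, df = kruskal_wallis_h([1, 2, 3], [4, 5, 6], [7, 8, 9])
```

For these toy groups the rank sums are 6, 15, and 24, giving H = 7.2 with 2 degrees of freedom.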

For the MP3 player, the Wald criterion demonstrates that only prior experience makes a significant contribution to the prediction. This indicates that people who are experienced with an MP3 player are more likely to complain about operational problems. No significant differences were found between the three countries regarding the MP3 player (see also Figure 8).

The participants who had more prior experience with MP3 players were significantly younger than those who had not, as tested with the Mann-Whitney test [U = 150.50, p < .001, r = -.57]. Previous research has shown that younger people have more experience with portable multifunctional electronic products and will therefore experience fewer operational problems with unfamiliar devices (Lawry, Popovic, & Blackler, 2009, 2010, 2011). We thus expected younger participants to mention fewer of these problems than older participants. However, the results in Table 3 indicate that the younger participants reported more operational problems than the older participants.
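The Mann-Whitney U test compares two independent groups on ranks, and the effect size r is commonly computed as z / sqrt(N) from the normal approximation. The sketch below assumes that convention; it applies a tie correction to the variance but no continuity correction, and the sign of z depends on which group is passed first. The function names and toy values (for example, ages of experienced vs. inexperienced users) are hypothetical.

```python
import math
from collections import Counter


def average_ranks(values):
    """Ranks starting at 1, with tied values sharing the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            ranks[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return ranks


def mann_whitney(group1, group2):
    """Return (U, z, r): U is the smaller U statistic, r = z / sqrt(N)."""
    n1, n2 = len(group1), len(group2)
    n = n1 + n2
    pooled = list(group1) + list(group2)
    ranks = average_ranks(pooled)
    u1 = sum(ranks[:n1]) - n1 * (n1 + 1) / 2
    u = min(u1, n1 * n2 - u1)
    # Normal approximation with tie-corrected variance.
    ties = sum(t ** 3 - t for t in Counter(pooled).values())
    var_u = n1 * n2 / 12 * ((n + 1) - ties / (n * (n - 1)))
    if var_u <= 0:
        raise ValueError("all values are tied; z is undefined")
    z = (u1 - n1 * n2 / 2) / math.sqrt(var_u)
    return u, z, z / math.sqrt(n)


# Toy values for two groups, not study data.
u, z, r = mann_whitney([1, 2, 3], [4, 5, 6])
```

Because every value in the first toy group is lower than every value in the second, U is 0 and both z and r are negative.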

A chi-square analysis was performed to determine whether the participants who complained about either the sensory or the operational quality of the alarm clock also complained about the same quality when operating the MP3 player. The results indicate that there was no significant relation between the soft usability problems of the alarm clock and those of the MP3 player [χ2(1, N = 84) = 0.51, p = 0.48], with more than half of the participants (approximately 55%) mentioning different types of problems for the two products.

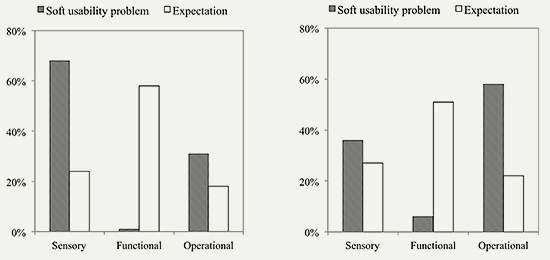

Soft Usability Problems and User Expectations

The participants’ expectations expressed before actually operating the two products were classified into the three categories: sensory quality, functional quality, and operational quality. The expectations related to the sensory quality of the two products were mainly about a big display, good looks, natural sounds, and light weight. As for functional quality, the participants mainly mentioned working well, multiple functions, long battery life, and large memory space. Expectations related to operational quality were mostly about ease of use. A comparison of the participants’ expectations before performing the operating tasks with the soft usability problems they expressed after operating the products (Figure 9) shows that these expectations were formulated in a much more general way. For instance, participants who expected ‘ease of use’ experienced ‘…confusion between setting the time and setting an alarm’ during use. In addition, there was little difference in user expectations between the alarm clock and the MP3 player (Figure 9). Interestingly, for both products, expectations related to functional quality far outnumbered those related to the other two qualities, yet hardly any functionality problems were reported in this experiment.

Figure 9. Soft usability problems and user expectations for the alarm clock (left chart) and the MP3 player (right chart).

User expectations differed between the three cultures, but, as mentioned before, most of the expectations regarding both products were about functional quality. However, with respect to the alarm clock, the South Korean participants were different in their expectations related to sensory quality, and regarded this quality as being as important as functional quality. See Figure 10 for the differences between the three countries.

Figure 10. User expectations of the alarm clock (left chart) and the MP3 player (right chart) among three countries.

Soft Usability Problems in Actual Use: Introspective vs. Retrospective Evaluation

The percentages of soft usability problems in this study are markedly different from those in the previous studies. While the survey studies showed that the problems were almost equally divided over the three product quality dimensions, in this real-time experiment problems regarding functional quality were rarely mentioned. This can be partly explained by the fact that in the survey studies people complained about a wide range of products, not only about the two products used in this experiment. However, the difference is still obvious if we look only at the problems with alarm clocks and MP3 players expressed in the survey studies and compare them with the results of this experiment (see Figure 11). Because we have no information about the brand and type of the alarm clocks and MP3 players complained about in the previous survey studies, a direct conclusion cannot be drawn. Nevertheless, the comparison serves to demonstrate this difference in the number of functionality problems mentioned.

Figure 11. Comparison of the frequencies of soft usability problems expressed by participants for the alarm clock (top) and the MP3 player (bottom) between the previous studies and this study.

Implications of Experienced Soft Usability Problems on Follow-up User Behaviour

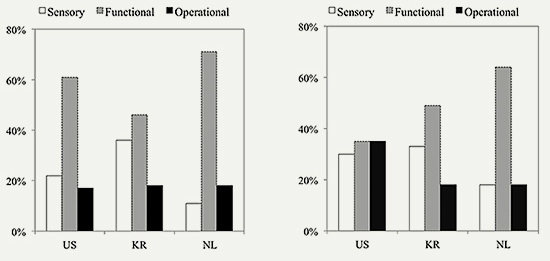

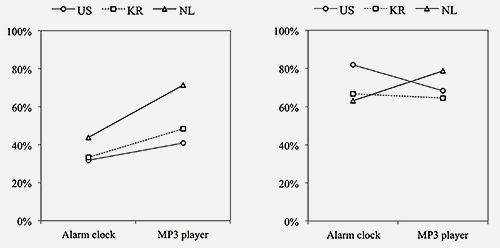

Previous studies (Kim & Christiaans, 2009; Kim et al., 2007) have shown that people are very irritated by soft usability problems. But what effect do these problems have on their subsequent behaviour? In the retrospective interviews carried out in this study, the participants were asked (a) whether they would return the product if they had bought it, and (b) whether they would buy this sort of product again in spite of the use problems. The results indicate that experiencing soft usability problems would not necessarily lead participants to return the products (see Figure 12). Across the three countries, 32% to 44% of the participants would return the alarm clock, compared with 41% to 71% for the MP3 player. Asked whether they would buy the same product again, 63% to 82% of the participants answered negatively for the alarm clock and 65% to 79% for the MP3 player. The cultural differences shown in Figure 12 were not significant.

Figure 12. Percentages of the participants expressing they “Would return the product” (left chart) and “Negative Purchase Intention” (right chart) per country.

Observations during Tests

All videotaped sessions were charted graphically in terms of the time taken to complete each task, whether the task was completed, and whether the participant consulted the product manual (see examples in Figure 13). This provides an overview of the observation data for the participants working with the products. For instance, in one session with the alarm clock, a participant completed the task of inserting the batteries in 40 seconds, an example of a task with low cognitive load. The participant then took 50 seconds to set the time, an example of a task with high cognitive load. Next, the participant tried to set the alarm by following the instruction manual, but gave up after 60 seconds without completing the task. The remaining tasks followed.

Figure 13. Examples of operation task completion in terms of cognitive load and time spent.

The graphical illustrations show that the participants were able to complete all operation tasks requiring low cognitive load with both products. They spent much more time on the operation tasks with the MP3 player than on those with the alarm clock. They also spent much more time on tasks requiring high cognitive load, and this extra time did not necessarily lead to task completion.

For further analysis of the completion rate of tasks requiring high cognitive load and the time taken on them, a selection of these tasks was made: ‘set the time’ and ‘set an alarm’ for the alarm clock, and ‘find a song,’ ‘set the shuffle function on,’ and ‘activate the voice recorder’ for the MP3 player. The average completion rate of these tasks was approximately 70% for the alarm clock and 40% for the MP3 player; the average time spent was 252 seconds for the alarm clock and 434 seconds for the MP3 player. The alarm clock thus had the higher completion rate and the shorter time, because its tasks require relatively less cognitive load than those of the MP3 player.
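The observation coding described in this section (time per task, completion, and manual use) and the per-product summary over the selected high-cognitive-load tasks can be illustrated with a short Python sketch. It is a hypothetical illustration, not the analysis procedure used in the study: the `TaskRecord` fields, the participant label `P1`, and the task label ‘put in batteries’ are assumptions. The text does not say whether the reported averages (252 and 434 seconds) are per task or summed per participant, so the sketch simply averages over task attempts. The example data reproduce the single alarm-clock session described earlier in this section; manual-use flags not reported there are placeholders.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class TaskRecord:
    """One coded task attempt from a videotaped session (hypothetical schema)."""
    participant: str
    product: str        # "alarm clock" or "MP3 player"
    task: str
    seconds: float      # time spent on the task
    completed: bool     # whether the participant completed the task
    used_manual: bool   # whether the product manual was consulted


# High-cognitive-load tasks selected for further analysis in the text.
HIGH_LOAD_TASKS = {
    "alarm clock": {"set the time", "set an alarm"},
    "MP3 player": {"find a song", "set the shuffle function on",
                   "activate the voice recorder"},
}


def summarize(records, product):
    """Completion rate and mean time per attempt over the selected high-load tasks."""
    selected = [r for r in records
                if r.product == product and r.task in HIGH_LOAD_TASKS[product]]
    if not selected:
        raise ValueError(f"no high-load task records for {product!r}")
    completion_rate = sum(r.completed for r in selected) / len(selected)
    mean_seconds = mean(r.seconds for r in selected)
    return completion_rate, mean_seconds


# The alarm-clock session described in the text. The participant label and the
# manual flags for the first two tasks are placeholders (not reported there).
example_session = [
    TaskRecord("P1", "alarm clock", "put in batteries", 40.0, True, False),
    TaskRecord("P1", "alarm clock", "set the time", 50.0, True, False),
    TaskRecord("P1", "alarm clock", "set an alarm", 60.0, False, True),
]
print(summarize(example_session, "alarm clock"))  # (0.5, 55.0)
```

Because inserting the batteries is not one of the selected high-load tasks, it is excluded, so the summary covers only setting the time (completed in 50 s) and setting the alarm (abandoned after 60 s).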

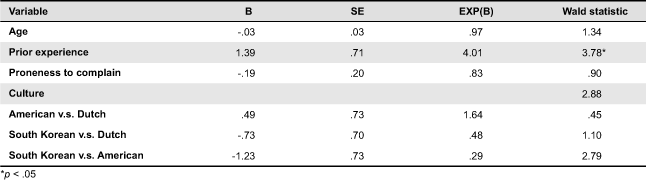

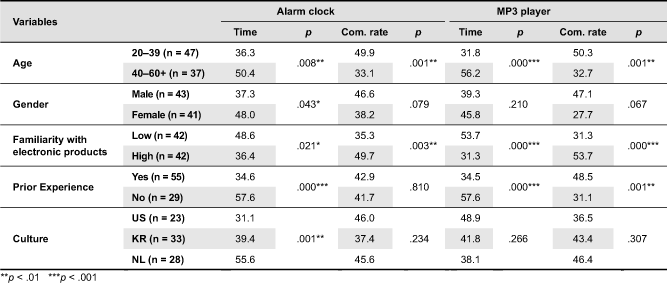

Task completion rate and time taken were also statistically analyzed to see how they were related to particular user characteristics such as age, gender, familiarity with electronic products, prior experience, and culture. See Table 7 for an overview.

Table 7. Results of Mann-Whitney tests relating time spent on tasks and completion rate to user characteristics, for the alarm clock and the MP3 player.

We asked the participants to think aloud while performing the given tasks, and emphasized this again during the sessions. However, as expected, the participants had difficulty verbalizing every step or decision in the process. Although some participants made comments every now and then, mainly out of frustration, the number of comments was too small to report on.

The results outlined in Table 7 indicate that age, familiarity with electronic products, prior experience, and culture are related to task completion rate and time taken. Older participants spent more time on the tasks and had a lower completion rate. The more familiar a participant was with electronic products, the higher the task completion rate and the less time spent; the same holds for prior experience with these types of products. Gender made a difference only in the time taken on the alarm clock tasks, where the female participants spent more time than the male participants. Finally, culture played a role in the time spent on the alarm clock tasks. No significant relationship was found between the aforementioned user characteristics and use of the product manual.

Task completion rate showed no significant relationship with either the intention to return the product or the intention not to buy products of this brand in the future. Task completion rate, time taken, and use of the product manual had no relationship with sensory quality or operational quality problems.

Conclusions and Discussion

The experiment described in this study was conducted to gain deeper insight into users’ usability experience when operating household electronics. Its main goal was to understand how the soft usability problems experienced in ‘actual use’ compare with those reported in previous survey studies (retrospective evaluation). Second, the study aimed to identify relationships between expectations, soft usability problems, product properties, and the personal background of participants. Although the contribution of this study lies foremost in its emphasis on user diversity in relation to the occurrence of soft usability problems, a number of other conclusions can also be drawn.

Soft Usability Problem Categories and Product Property

In a previous study, the two product properties of operational transparency and physical interaction density were shown to influence the type of problems expressed (Kim & Christiaans, 2012). Problems regarding sensory quality were mainly observed in operationally transparent (low cognitive load required) and/or dense physical interaction products, whereas problems regarding operational quality were closely related to operationally non-transparent (high cognitive load required) and/or low interaction density products. We would therefore have expected the same pattern in this experiment: with its high operational transparency, the alarm clock should be more likely to produce sensory problems, but also operational problems because of its low physical interaction density. Likewise, with its low operational transparency and high physical interaction density, the MP3 player should be more likely to produce both operational and sensory problems. However, usability problems with the alarm clock were predominantly related to sensory quality, and those with the MP3 player predominantly to operational quality. This refines the findings of the previous study regarding the interaction between product properties and soft usability problems: operational transparency seems to be a more accurate predictor of the soft usability problems of an electronic product than its physical interaction density. This does not mean that physical interaction density should be neglected; the percentages of operational problems with the MP3 player (36%) and of sensory problems with the alarm clock (31%) remain substantial.

In addition, the role of an intervening variable might be taken into account: liking or disliking the product. In a survey study on (dis)pleasure, Jordan (1998) also used an alarm clock and found that it was always associated with annoyance because its buzzer tone is irritating. This leads to negative emotion and sensory displeasure with the alarm clock, however easy it is to use. Although it would be interesting to speculate on this effect in our experiment, it was not studied as a separate variable.

Soft Usability Problems: User Characteristics Compared to Product Property

The experiment in this study has shown that a limited number of user characteristics influence performance on the operation tasks selected for the two electronic products. First, age influenced the time taken and the ability to complete the tasks: the older the participant, the more time taken and the fewer tasks completed. Second, gender affected only the alarm clock tasks, with female participants taking more time. Third, familiarity with electronic products and prior experience with these types of products had a positive effect on time taken and task completion. Finally, culture played a role in the time needed to complete the tasks. This study has also shown that the origin of most usability problems lies neither in isolated user characteristics nor in product properties, but rather in the interaction between the two. The following findings support this statement:

- ‘Locus of control,’ an important factor in consumer complaint behaviour and referred to as attribution of blame by Donoghue and de Klerk (2006), played a role in the type of soft usability problems mentioned in our study. Only for the alarm clock was it found that the weaker a participant’s internal locus of control, and hence the more blame was attributed to the product or to others, the more operational problems were mentioned relative to sensory problems, and vice versa. This result was expected, because operational problems are more serious and in this experiment are considered the main weaknesses of the product.

- ‘Proneness to complain,’ as measured through the questionnaire, affected the type of soft usability problems mentioned, but significantly so only for the alarm clock. The participants who were prone to complain were more dissatisfied with operational quality than with sensory quality, and vice versa.

- Cultural effects were significant only for the alarm clock: the South Korean participants expressed more sensory quality problems, and the Dutch participants more operational quality problems. This was not observed with the MP3 player. This suggests that culture could play an important role, especially in usability problems with simple products: the more complicated a product is, the more its use depends on human cognition rather than on culture or individual differences. As described in the introduction, soft usability problems occur when there is a gap between users’ expectations and their actual use experience. These expectations are rooted in people’s prior experience with products. Because such prior experience is shaped by local culture, soft usability problems cannot be independent of the cultural background of the user.

- Prior experience influenced the type of soft usability problems expressed only for the MP3 player. Participants with prior experience of this product type were more likely to complain about its operational quality, whereas those with no prior experience complained more about sensory quality. This result is striking, considering that the more prior experience users have with related technology, the more quickly they can learn to use newer technology (Lewis, Langdon, & Clarkson, 2008), and that interactions exploiting prior knowledge make products faster and easier to operate and less prone to error (Blackler, 2008; Blackler, Popovic, & Mahar, 2010; Langdon, Lewis, & Clarkson, 2007; Lewis et al., 2008). A possible explanation is that people with more prior experience have greater use fixation, especially with complicated electronic products such as MP3 players. Such fixation can easily lead to problems related to operational quality when they use an unfamiliar user interface. People tend to maintain their habitual way of use (Reason, 1990), and acquired use habits seem to limit flexibility when confronted with the unfamiliar. It has been shown, for example, that people who regularly use a particular type of coffee-cream container need more attempts to open a new type of container than less experienced users (Kanis, 1998). We speculate that those with more prior knowledge of a technology or interface may also be better equipped to raise use issues and even to suggest improvements based on operational problems they have experienced before.

- Although older people generally have more experience than younger people, this is not necessarily so with electronic products such as MP3 players. This suggests that prior experience with these kinds of products is a better predictor of user performance than age. A recent study supports this assumption, concluding that experience rather than age may be the best predictor of performance and finding no significant generation-related differences in effectively using technological products (Lewis, Langdon, & Clarkson, 2007).

Soft Usability Problems: User Expectations Compared to Actual Use

In general, use problems occur when there is a discrepancy between prior expectations and actual use experience (Brombacher, Sander, Sonnemans, & Rouvroye, 2005; Karapanos & Martens, 2007; Koca, Karapanos, & Brombacher, 2009). In this study, the user expectations expressed by the participants before actually using the two products were mostly related to the functional quality of the products. During actual use, however, the problems expressed by the participants only included sensory and operational problems. This might indicate that users, when asked about their expectations before actual use, generally focus on functional quality. When they actually use the product and encounter difficulties that cannot be attributed to functional or technical limitations, they blame the product for its lack of sensory and operational quality. The two products used in this study appeared to function normally in spite of the many problems participants experienced, which often prevented them from completing the tasks. Hence, it could be speculated that user tests should focus not on the functional qualities of electronic products but rather on their operational and sensory qualities. Think-aloud methods might be necessary to uncover soft usability issues concerning sensory and operational qualities in real-time user studies.

Soft Usability Problems: Introspective versus Retrospective Evaluation

There are differences between the findings of the previous surveys (Kim & Christiaans, 2012; Kim et al., 2007) and those of the experiment carried out in this study, which we suspect relate to the difference in research method, i.e., between so-called actual use in user trials and retrospective evaluation based on long-term interaction. The participants in our user-trial-like experiment reported soft usability problems during or immediately after actual use, whereas the participants in the previous surveys reported them retrospectively. The difference shows most clearly in the frequency of functional quality problems: in contrast to the previous surveys, very few function-related problems were mentioned by the participants in the experiment.

One explanation might be the influence of time on the experience of usability. Criticizing current usability tests, in which the ‘naïve’ user is learning about the product for the first time, Dillon (2002) emphasized the importance of stable estimates of long-term usability: current usability tests provide data not about usability but about learnability, and such short-term interactions may not represent stable interaction. First-time interactions are very likely to lead to sense-related impressions. Following this reasoning, and given the characteristics of current usability tests, it is predictable that operational problems (from learnability) and sensory problems (from first impressions) would dominate in an experiment employing the current usability test format. This could explain why the participants raised few problems related to functional quality in this experiment.

Another explanation might be that the tasks given in the experiment focused on operating the products in order to get them to work. This process-oriented attention to operation might also explain why participants encountered a number of sensory problems ‘on the way,’ which they remembered and expressed during the debriefing sessions.

Implications of Soft Usability Problems and Follow-up User Behaviour

Soft usability problems do not always lead to product return, but they clearly have a negative influence on future purchase intentions. We expected that task completion or failure might influence this decision, but the results of this study show that this is not the case. The relatively low percentage of participants who said they would return the product (47% and 38% for the MP3 player and the alarm clock, respectively), compared with the much higher percentage who would simply not buy that product again (around 70%), may be explained by people’s reluctance to put in the effort of actually returning the product.

Because the type of soft usability problems raised during actual use, as revealed through direct observation, is partly different from the type expressed in retrospect in survey studies, companies should consider applying a combination of both research methods. Moreover, the types of soft usability problems can be partly anticipated by defining both the product properties of a consumer electronic product and the characteristics of its users. To effectively reduce soft usability problems and increase user satisfaction with electronic consumer products, companies have to adopt a proactive approach to understanding both their products and their target users before launching a product onto the market. Most companies seem not to include usability as a factor (or not to consider it an important one) in their financial risk analysis, even though usability problems turn out to be the major reason for product returns. In this respect, a change in companies’ internal culture toward critically examining and researching the real factors involved in human-product interaction is urgently needed. Although there is an abundance of usability methods and techniques, they often fall outside the concerns of the project or design team. Through this study and our previous studies, we have tried to clarify what ordinary people experience when interacting with everyday electronic products. Participants shared their problems with us either in retrospect or during actual use of the products; the latter, together with the factors influencing these usability problems, is the topic of this study.

Acknowledgements

The author gratefully acknowledges the support of the Innovation-Oriented Research Programme ‘Integrated Product Creation and Realization (IOP IPCR)’ of the Netherlands Ministry of Economic Affairs. I am deeply grateful to Dr. Henri Christiaans for his valuable comments and support. I also thank the reviewers for their constructive feedback.

References

- Blackler, A. (2008). Intuitive interaction with complex artefacts: Empirically-based research. Saarbrücken, Germany: VDM Verlag Dr. Müller.

- Blackler, A., Popovic, V., & Mahar, D. (2010). Investigating users’ intuitive interaction with complex artefacts. Applied Ergonomics, 41(1), 72-92. doi: 10.1016/j.apergo.2009.04.010.

- Broadbridge, A., & Marshall, J. (1995). Consumer complaint behaviour: The case of electrical goods. International Journal of Retail & Distribution Management, 23(9), 8-18.

- Brombacher, A. C., Sander, P. C., Sonnemans, P. J. M., & Rouvroye, J. L. (2005). Managing product reliability in business processes ‘under pressure’. Reliability Engineering and System Safety, 88(2), 137-146.

- Christiaans, H., & Kim, C. (2009). “Soft” problems with consumer electronics and the influence of user characteristics. In M. Norell Bergendahl, M. Grimheden, L. Leifer, P. Skogstad, & U. Lindemann (Eds.), Proceedings of the 17th International Conference on Engineering Design. Somerset, UK: the Design Society.

- Dantas, D. (2011). The role of design to transform product attributes on perceived quality. Paper presented at the 6th International Congress on Design Research, Lisbon, Portugal.

- den Ouden, E., Yuan, L., Sonnemans, P. J. M., & Brombacher, A. C. (2006). Quality and reliability problems from a consumer’s perspective: an increasing problem overlooked by businesses? Quality and Reliability Engineering International, 22(7), 821-838.

- Dillon, A. (2002). Beyond usability: Process, outcome and affect in human-computer interactions. Canadian Journal of Library and Information Science, 26(4), 57-69.

- Donoghue, S., & de Klerk, H. M. (2006). Dissatisfied consumers’ complaint behavior concerning product failure of major electrical household appliances—a conceptual framework. Journal of Family Ecology and Consumer Science, 34, 41-55.

- Goodman, J., Ward, D., & Broetzmann, S. (2002). It might not be your product. Quality Progress, 35(4), 73-78.

- Hofstede, G. (2003). Culture’s consequences: Comparing values, behaviors, institutions and organizations across nations (2nd ed.). London: Sage Publications, Inc.

- Jordan, P. W. (1998). Human factors for pleasure in product use. Applied Ergonomics, 29(1), 25-33. doi: 10.1016/s0003-6870(97)00022-7.

- Kanis, H. (1998). Usage centred research for everyday product design. Applied Ergonomics, 29(1), 75-82.

- Karapanos, E., & Martens, J.-B. (2007). Characterizing the diversity in users’ perceptions. In C. Baranauskas, P. Palanque, J. Abascal & S. Barbosa (Eds.), Human-computer interaction – INTERACT 2007 (Vol. 4662, pp. 515-518). Berlin / Heidelberg, Germany: Springer.

- Kim, C., & Christiaans, H. (2009). Usability and ‘soft problems’: a conceptual framework tested in practice. Paper presented at the World Congress of the International Ergonomics Association, Beijing, China.

- Kim, C., & Christiaans, H. (2012). ‘Soft’ usability problems with consumer electronics: the interaction between user characteristics and usability. Journal of Design Research, 10(3), 223-238.

- Kim, C., Christiaans, H., & van Eijk, D. (2007). Soft problems in using consumer electronic products. In Proceedings of the 2nd World Conference on Design Research. Hong Kong, China: International Association of Societies of Design Research.

- Koca, A., Karapanos, E., & Brombacher, A. (2009). ‘Broken expectations’ from a global business perspective. In D. R. Olsen, Jr., K. Hinckley, M. R. Morris, S. Hudson, & S. Greenberg (Eds.), Proceedings of the 27th International Conference on Human Factors in Computing Systems. New York, NY: ACM Press.

- Langdon, P., Lewis, T., & Clarkson, J. (2007). The effects of prior experience on the use of consumer products. Universal Access in the Information Society, 6(2), 179-191. doi: 10.1007/s10209-007-0082-z.

- Law, E. L.-C., Roto, V., Hassenzahl, M., Vermeeren, A. P. O. S., & Kort, J. (2009). Understanding, scoping and defining user experience: a survey approach. In D. R. Olsen, Jr., K. Hinckley, M. R. Morris, S. Hudson, & S. Greenberg (Eds.), Proceedings of the 27th International Conference on Human Factors in Computing Systems. New York, NY: ACM Press.

- Lawry, S., Popovic, V., & Blackler, A. (2009). Investigating familiarity in older adults to facilitate intuitive interaction. In Proceedings of the 3rd World Conference on Design Research. Seoul, Korea: International Association of Societies of Design Research.

- Lawry, S., Popovic, V., & Blackler, A. (2010). Identifying familiarity in older and younger adults. In Proceedings of the International Conference of Design Research Society. Montréal, Canada.

- Lawry, S., Popovic, V., & Blackler, A. (2011). Diversity in product familiarity across younger and older adults. In Proceedings of the 4th World Conference on Design Research. Delft, the Netherlands: the International Association of Societies of Design Research.

- Lewis, T., Langdon, P., & Clarkson, P. (2007). Cognitive aspects of ageing and product interfaces: Interface type. In C. Stephanidis (Ed.), Universal access in human-computer interaction. Coping with diversity, Lecture Notes in Computer Science (Vol. 4554, pp. 731-740). Berlin / Heidelberg, Germany: Springer.

- Lewis, T., Langdon, P., & Clarkson, P. (2008). Prior experience of domestic microwave cooker interfaces: A user study. In P. Langdon, J. Clarkson & P. Robinson (Eds.), Designing inclusive futures (pp. 95-106). London, UK: Springer.

- Madureira, O. M. (1991). Product standardization and industrial competitiveness. Paper presented at the International Conference on Standardization and Quality, Sao Paulo, Brazil.

- McCrae, R. R., & Costa Jr, P. T. (2004). A contemplated revision of the NEO five-factor inventory. Personality and Individual Differences, 36(3), 587-596. doi: 10.1016/s0191-8869(03)00118-1.

- Norman, D. (2004). Emotional design: Why we love (or hate) everyday things. New York, NY: Basic Books.

- Reason, J. (1990). Human error. New York, NY: Cambridge University Press.

- Söderholm, P. (2007). A system view of the no fault found (NFF) phenomenon. Reliability Engineering & System Safety, 92(1), 1-14. doi: 10.1016/j.ress.2005.11.004.

- Thiruvenkadam, G., Brombacher, A. C., Lu, Y., & den Ouden, E. (2008). Usability of consumer-related information sources for design improvement. In Proceedings of the Professional Communication Conference. New York, NY: IEEE International.