Product Sounds: Basic Concepts and Categories

Elif Özcan *, René van Egmond, and Jan J. Jacobs

Faculty of Industrial Design Engineering, Delft University of Technology, Delft, The Netherlands

In this paper we investigated which categories of product sounds listeners can distinguish. We explored the perceptual domain of product sounds in order to be able to derive perceptually similar categories and determine how users are able to describe these categories by merely listening to them. Employing two different experimental paradigms (free categorization and pairwise comparison), we determined six perceptually relevant product sound categories (air, alarm, cyclic, impact, liquid, mechanical) and nine basic concepts that mentally represent these categories (sound source, action, location, sound type, onomatopoeias, psychoacoustics, temporal descriptions, emotions, abstract meaning). The results indicate that these categories are not only a direct consequence of perceptual similarities in the spectral temporal structure but also result from similarities based on cognitive, emotional, and contextual evaluations of the sound. We conclude that sound is an intrinsic property of a product and, with the knowledge gathered in this study, designers will be able to relate the auditory property of a product to the overall product experience. In addition, this paper presents two methods for the perceptual evaluation of product sounds.

Keywords – Product Sounds, Sound Categories, Multi-sensory Design, Sound Design, Categorization.

Relevance to Design Practice – Product sound design (PSD) is an emerging topic within the field of product development. This study provides insights into the perceptual attributes of product sounds and their cognitive relevance from the users’ perspective and discusses the findings in relation to overall product experience and product (sound) design.

Citation: Özcan, E., van Egmond, R., & Jacobs, J. J. (2014). Product sounds: Basic concepts and categories. International Journal of Design, 8(3), 97-111.

Received January 23, 2013; Accepted May 25, 2014; Published December 31, 2014.

Copyright: © 2014 Özcan, van Egmond, and Jacobs. Copyright for this article is retained by the authors, with first publication rights granted to the International Journal of Design. All journal content, except where otherwise noted, is licensed under a Creative Commons Attribution-NonCommercial-NoDerivs 2.5 License. By virtue of their appearance in this open-access journal, articles are free to use, with proper attribution, in educational and other non-commercial settings.

*Corresponding Author: e.ozcan@tudelft.nl.

Dr. Elif Özcan is an Assistant Professor at the Faculty of Industrial Design Engineering of Delft University of Technology. She has published in several peer-reviewed international journals and has recently been a guest editor for the Journal of Sonic Studies’ special issue on Sound Design (2014). With her PhD on meaningful associations of products’ sounds, Özcan’s recent research focuses on the perceptual and cognitive processes underlying meaning attribution to product experiences. She is, and has been, involved in several commercial research projects (e.g., Toyota Motors Europe, European Space Agency) and European doctoral level research programs (Cognovo, a Marie Curie doctoral program on cognition and creativity, and docARTES - practice-based research in musical arts designed for musician-researchers).

Dr. René van Egmond is an Associate Professor of Product Sound Design & Perception at the Faculty of Industrial Design Engineering. He leads a small but dedicated group in the field of product sound design. Van Egmond works with PhD students on two research lines: Interactive Audio Design and Embodied Audio Design. He has published in several peer-reviewed international journals. In addition, he has conducted research for companies like Philips, P&G, and recently, Toyota, and for organizations like the European Space Agency.

Prof.ir. Jan Jacobs was a full-time professor in the field of applied aesthetics at the Faculty of Industrial Design Engineering of Delft University of Technology. In February 2012 he retired. Jan Jacobs was one of the first graduates of the Faculty in 1973. He worked for many years in industry as an Industrial Designer, winning many Design Awards. Since 1986 he was a full professor in applied aesthetics. His research interests focused on design history and on new areas of industrial design, such as design experience and sound design. For many years Jacobs was chairman of the Dutch Association of Industrial Designers (KIO) and a member of the Dutch Arts Council. His main focus in education was in supervising graduates. The nearly 400 graduation projects under his supervision were almost all in collaboration with companies all over the world, such as Volvo, BMW, Sony, Philips, General Electric and Johnson Controls.

Introduction

Design thinking predominantly operates in the visual realm. Designers are proficient at thinking in terms of shape, size, colour, and material and at creating preliminary versions of a non-existing product in the form of visual sketches, scaled models, and prototypes (Kavakli, 2001; Oxman, 2002; Purcell & Gero, 1998). The perceptual expertise of designers with visual product properties also determines the language used to present, discuss, and evaluate the product or its concept (Goldschmidt & Sever, 2011; Ulusoy, 1999; Wiegers, Langeveld, & Vergeest, 2011). Although visual imagery and visual reproduction are the primary activities of designers during product development, the user experience of a product is also determined by the other, less consciously processed senses. The vocabulary of designers with respect to other sensory product properties is limited, although the contribution of these properties to the overall product experience is well acknowledged (Crilly, Moultrie, & Clarkson, 2004; Özcan & van Egmond, 2009; Schifferstein & Spence, 2008; Spence & Zampini, 2006). This paper primarily focuses on the auditory properties of a product and aims to provide designers with insights into the way product sounds are perceived and described by users.

Not much is known about product sounds from the human perspective. In practice, product sounds are often tackled within the fields of engineering and acoustics (Lyon, 2000; Susini et al., 2004; van Egmond, 2008) or within the context of interaction design (Franinović & Serafin, 2013; Frauenberger & Stockman, 2009; Hermann, Hunt, & Neuhoff, 2011; Lemaitre et al., 2009). Knowledge from these fields is necessary to understand how to construct a product such that it evokes a specific sound experience (e.g., sustainability), how to measure the product’s acoustical quality, or how to interact with objects that produce sounds. However, a systematic inventory of the experiential aspects of product sounds that could guide product developers is missing. Therefore, we have conducted studies to understand the categories of product sounds and the basic concepts that mentally represent these categories.

A second focus of this paper is on the methodology that can be used to study the perception of product sounds. Because product sound design is a relatively new field, the methods to evaluate sound from the perspective of users have not yet been established. There are still methodological concerns as to how to accurately and reliably capture (perceptual and meaningful) user experiences involving product sounds (see, Giordano, Susini, & Bresin, 2013 for an overview). The studies conducted and presented in this paper tackle this issue by comparing two different methodologies that serve a similar purpose, i.e., perceptual similarity and its relevance to conceptual association. In the following paragraphs we will first present the theoretical background for (product) sound perception and then explain our approach to the perceptual evaluation of product sounds.

Categorization and Similarity

The basis for any creative thinking is the ability of a designer to perceptually distinguish between various solutions to a design problem and to make these solutions conceptually relevant. The underlying cognitive function during such an activity is categorization. Muller (2001) suggests that in the process of product form creation, the categorization function is perpetually present when designers start conceptualizing their ideas, and when they compare their creations with existing product categories (i.e., prototypes) or to earlier solutions provided by themselves (i.e., sketches and models) and attempt to make the product context-relevant. Muller further proposes that categorization allows designers/users to distinguish a product on the basis of its form (solution-typical categorization), its function (prototypical categorization) and its usage/interaction (behaviour-typical categorization). Eventually, during the creative phases of product development, categorization helps designers to not only develop expertise in perceptual tasks but also helps them to communicate their ideas on a conceptual level.

In cognitive science, a category exists whenever two or more distinguishable objects are treated equivalently as a result of their similarity (Mervis & Rosch, 1981; Murphy, 2002; Rosch, 1978). How similarity constrains categorization is a complex process. Different individuals may spontaneously adopt different strategies for similarity judgments during categorization and an individual may apply different strategies on different trials (e.g., holistic, analytic, one-feature) (Eme & Marquer, 1998; Medin & Barsalou, 1987). According to Goldstone (1994), similarity is flexible (not necessarily based on perceptual input) and context dependent (contexts that are defined by the individual’s intentions, goals and past experiences or by a background set of related items). Thus, similarity integrates multiple sources of information; and formation of categories is therefore dependent on the type of correspondences and relational properties people find between the items in comparison.

The level of object information processing also influences categorization. Categories can occur as a result of sensory perception or generic knowledge (Medin & Barsalou, 1987). Sensory perception categories stem from the similarities in the perceptual features (e.g., colour, sound). Generic knowledge categories stem from the similarities in the conceptual knowledge (e.g., birds, cars) that is linked to meaningful semantic associations (e.g., birds have wings and they can fly). In addition, emotional responses elicited by objects and events can also be used as the basis for relating objects to one another (Isen & Daubman, 1984; Niedenthal, Halberstadt, & Innes-Ker, 1999). In summary, the sensory, cognitive, conceptual, and emotional processing of objects and events may result in the categorical organization of objects.

Auditory Categories

Sound is considered a multilayered phenomenon that is caused by an object and action in a location (e.g., a car passing in a parking lot), which evokes sensations (e.g., loudness, sharpness) and elicits emotions (e.g., pleasantness), that can be measured in acoustical terms (e.g., frequency, intensity), and has meaningful associations (e.g., a sportive car sound, adventure, safety). Therefore, auditory categories could reflect any of these dimensions. For example, Gaver (1993a, 1993b) has theoretically studied the physics of sound producing events (e.g., materials, actions, objects) and discerned the following categories within the environmental sound domain: impact (solids), scraping (solids), dripping (liquids), temporally complex events (interaction of solids and liquids), and machine sounds (complex events involving various sources). Gygi, Kidd, and Watson (2007) focused on acoustical similarity using a wide range of sound events (human and animal voices, sounds produced by human-object interaction, animals, vehicles, small daily objects, etc.) and determined dimensions such as harmonicity, modulation depth, continuity, and silence as the bases for their auditory categories. The occurring categories not only reflected acoustical variables (i.e., harmonic sounds, continuous sounds) but also events and interactions (i.e., discrete impact sounds, vocalizations, and non-vocalizations). The underlying perceptual dimensions determined by Bonebright (2001) were mostly characterized by psychoacoustical evaluations (dull/sharp, smooth/rough, pleasantness) but also by some acoustical variables (intensity, frequency, amplitude, dynamic range, and time).

Sounds can also be discriminated on the basis of the emotions they elicit. Sensory pleasantness was one of the factors that characterized similarity within sound categories in Bonebright’s study (2001). Bergman, Sköld, Västfjäll, and Fransson (2009) have suggested that part of the cognitive categorization of sound is mediated by emotional reactions to sound. Thus, emotions and sensory pleasantness can underlie some auditory categories.

Studies of Guastavino (2007) and Dubois, Guastavino, and Raimbault (2006) suggest that environmental sounds are meaningful entities in a social context to listeners with certain intentions. Similarly, from a cognitive perspective, auditory categories have been investigated through people’s observations and subjective sound descriptions (Porteous & Mastin, 1985), cognitive judgments (Ballas, 1993; Dubois, 2000), and the first conceptual representation that is activated upon auditory perception (Marcell, Borella, Greene, Kerr, & Rogers, 2000). Consequently, in these studies sound categories reflected varying degrees of common conceptual knowledge (e.g., nature, bathroom, water, door, indicator / signaling). In addition, some of the cognitive categories were explained by common acoustical features (e.g., sounds of continuous modulated noise, sounds with discrete transient components).

The aforementioned literature also suggests that cognitive categories can be grounded in perceptual similarity. There is an inherent relationship between the acoustical constitution of a sound and its effect on identifiability (Gaver, 1993a; Gygi, Kidd, & Watson, 2004). That is, similar events / sound sources may produce perceptually similar sounds (e.g., shavers and hair-trimmers). Thus, perceptual similarity can be an indicator of conceptual similarity. However, the opposite may not be true: members of auditory categories may often not share common acoustical features if the sounds are categorized only on the basis of cognitive judgments (e.g., digital and old-fashioned alarm clock sounds).

Concepts and Semantic Knowledge

Concepts are meaningful units that bridge multi-sensory information and corresponding semantic knowledge (te Linde, 1983; Paivio, 1991). Thus, a concept holistically represents a body of knowledge that originates from sensory and semantic systems. Concepts and categories are often treated as the same thing. However, a concept is considered to be the mental representation of an object or a class of similar objects, whereas a category is the class itself (Murphy, 2002). Thus, a concept (e.g., a sports car) or knowledge pertaining to a concept (e.g., driving, highway, sportive, exciting, loud) can combine several different classes of objects/events to become the underlying factor for categorization (e.g., Formula 1, Ferrari). Not all concepts refer to concrete objects; concepts can also represent abstract notions, which are implicitly experienced (e.g., adventure, freedom) or emotions (e.g., love, pleasantness) (Murphy, 1996; Lakoff & Johnson, 1980).

In the light of the above literature, we can conclude that categories are functional and systematic units, whereas concepts are the knowledge structures that give meaning to categories. Therefore, conceptual associations which occur will always depend on the characterizing features of a category (e.g., sensory properties, emotion, objects, contexts, events). For product sounds, it is necessary to determine basic concepts that make these sounds meaningful to both users and designers. One way to determine them is to understand the semantic associations listeners have with them.

Semantics of Auditory Concepts

Cognitive studies with environmental sounds reveal that the name of the sound event (i.e., sound source in action) is the most frequently occurring sound description (e.g., car passing). Yost (1991) explains this by noting that when listeners hear a sound, they are also able to (visually) imagine its source in action (i.e., sound event), suggesting a strong link between the sound and its originator. Conceptual associations are activated earlier than the names of objects (Cummings et al., 2006; McCauley, Parmelee, Sperber, & Carr, 1980; see also Cleary, 2002). According to Vanderveer (1979), these associations might be limited to the perceptual structure of a sound (e.g., spectral-temporal content). In addition, environmental sounds become meaningful because they elicit sensory reactions and emotions in listeners (von Bismarck, 1974; Björk, 1985; Edworthy, Hellier, & Hards, 1995). Consequently, listeners may judge sounds on the basis of psychoacoustical reactions (e.g., sharpness, loudness, dullness) and emotional responses (e.g., tense, unpleasant, obtrusive). Ballas (1993) also revealed that environmental sounds are time- and context-related entities. Fabiani, Kazmerski, Cycowicz, and Friedman (1996) have categorized generic sound descriptions as not-known (e.g., noise), sound imitation (e.g., too-too-too), acoustic description (e.g., high-pitched), and name or compound name (e.g., bird, water drain bubbles). Their study and Marcell et al. (2000) showed that there are alternative ways of describing a sound, indicating that a sound can evoke more than one conceptual association.

Perceptual Evaluation of Sounds

Giordano et al. (2013) have extensively described methods that are used for the perceptual evaluation of sound-producing objects. For example, semantic differentials, identification and categorization, scaling and rating, and dissimilarity estimation were mentioned among those methods. Considering the needs in the field of product development, it is important to establish the relationship between perceptual (i.e., acoustical) and conceptual (i.e., cognitive, semantic) similarity and how such distinct similarities underlie product sound categories. The reason for this is that designers consider product sounds as entities to be manipulated and such manipulations have an effect on both the acoustical constitution of the sound and its conceptual associations. Furthermore, the manipulations are to be evaluated by a panel of listeners (a.k.a., users). For this purpose, semantic differentials and/or Likert scales are used as a common methodology to capture experiences with product sounds. With these methods, essentially, sound designers aim to link the acoustical constitution of sounds to their semantic associations via a preselected set of words reflecting the type of experience to be measured. By doing so, they can compare all the manipulations of product sounds created for the desired auditory experience, or they can benchmark the sounds they created in comparison to the sounds of other similar products in the market. For example, car companies widely use these methods to understand the acoustic quality of their engine sounds (Bisping, 1997; Chouard & Hempel, 1999; Letens, 2000). These methods have also been used, in a more general context, to understand the psychoacoustical limits of human auditory perception (e.g., von Bismarck, 1974; Kendall & Carterette, 1993; Kidd & Watson, 2003), the basic semantics of product sounds (Özcan & van Egmond, 2012) and emotional responses to environmental sounds (e.g., Björk, 1985). Thus, semantic differentials and Likert scales can be used as a reliable method for measuring auditory experiences.

However, Likert scales or semantic differentials as methodologies have their own limitations if the aim is more than simply capturing the desired experiences for a particular product (see also, Bonebright & Flowers, 2011, for the perceptual and cognitive limitations during sound evaluation). That is, these methods might be ineffective for defining the perceptual domain of product sounds, mainly because the sounds are evaluated on their own without comparison. The listeners in an experimental condition would not be aware of the full extent of the rating scale: the sounds heard so far set the limits of the scale, and a following sound may unexpectedly be experienced as falling outside those limits. Thus, the ratings provided for the entire set of sounds may not provide reliable results for defining the borders of auditory perception for the purpose of obtaining sound categories. Because the main aim of this paper is to provide designers with perceptually relevant categories of product sounds (and underlying concepts), we are interested in employing methods that force the listeners to directly compare the similarity of the product sounds.

Two main experimental paradigms are commonly used to determine auditory similarity and consequently sound categories. These paradigms are pairwise comparison and (free) categorization. In a pairwise comparison task, listeners hear two sequentially presented sounds and indicate how similar they are on a Likert scale. In a (free) categorization task, listeners are able to compare several sounds, freely sort the ones they find similar, and rate the internal consistency of the category members. The type of experimental paradigm used to determine categories may moderate the type of similarity identified in judgments of environmental sounds (Aldrich, Hellier, & Edworthy, 2009; Gygi et al., 2007; Bonebright, 2001). Aldrich et al. (2009) argued that despite correlations in the resulting categories from both paradigms, similarity judgments in a pairwise comparison task resulted in acoustical similarity, whereas a free categorization task required conceptual analysis of the sound. Nevertheless, if the signal-referent relationship is strong, then conceptual and perceptual categories should resemble each other.

This Study

For the current study, we employed both paradigms (i.e., free categorization and pairwise comparison) in two consecutive experiments. In Experiment 1, we used the free categorization paradigm with a set of product sounds. Participants were asked to sort all the presented sounds into categories which they created themselves (i.e., free categorization). A sound could only belong to one category. Participants could create as many categories as they wished; they also labeled the categories according to the common associations all the sounds in a category had. The free categorization paradigm allowed us to determine categories within the domain of product sounds and the conceptual associations of these categories. In Experiment 2, we used the pairwise comparison paradigm with a set of product sounds representing the categories determined in the previous experiment. Participants judged one pair of sounds at a time and rated the similarity of the sounds. Pairs had either similar sounds from the same sound category or dissimilar sounds from different categories. After rating all the pairs, participants indicated which strategy they used the most for comparing the similarity of the paired sounds. The strategies were drawn from the conceptual associations determined in the previous experiment.

Experiment 1—Categorization of Product Sounds

In Experiment 1 a free categorization paradigm was employed to determine product sound categories and their underlying (acoustical) dimensions and concepts. The three objectives of this experiment were to determine (1) the domain of domestic product sounds together with the constituting categories, (2) the acoustical / psychoacoustical dimensions that underlie this domain, and (3) the conceptual structure of the categories.

Method

Participants

Twenty-eight students and employees of Delft University of Technology volunteered. The average age of the participants was 27.5 years. All participants reported normal hearing.

Stimuli

Thirty-two domestic product sounds were selected from various sound effect CDs. The selected sounds represented a wide range of product sounds that can be heard on a daily basis when people physically interact with products in domestic environments. For example, shavers, hairdryers, microwave ovens, doors of washing machines, alarm clocks and coffee machines emit sounds when people use these products. Thus, such sounds inspired the selection criteria for the experiment. The selected sounds were edited on a Macintosh PowerPC G4 computer using the sound-editing program Sound Studio. Sounds longer than 5 seconds were trimmed to a maximum duration of 5 seconds. Sounds that were shorter than 5 seconds were not changed in duration. All sounds were saved in a stereo format with a sampling rate of 44.1 kHz and a bit depth of 16 bits. The loudness levels were adjusted to a comfortable listening level for each sound. The participants were not allowed to change the sound levels during the experiment.
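The trimming rule described above can be illustrated with a minimal sketch. It is not the authors' workflow (they report using Sound Studio); it only assumes uncompressed WAV input and uses Python's standard `wave` module. The function name `trim_wav` is hypothetical.

```python
import io
import wave

MAX_SECONDS = 5  # maximum stimulus duration reported in the text


def trim_wav(src, dst, max_seconds=MAX_SECONDS):
    """Copy a WAV file, keeping at most `max_seconds` of audio.

    `src` and `dst` may be file paths or binary file-like objects.
    Returns the number of frames written.
    """
    with wave.open(src, "rb") as reader:
        params = reader.getparams()
        max_frames = int(max_seconds * params.framerate)
        # Sounds shorter than the limit keep their original duration.
        frames_to_keep = min(params.nframes, max_frames)
        audio = reader.readframes(frames_to_keep)
    with wave.open(dst, "wb") as writer:
        writer.setnchannels(params.nchannels)
        writer.setsampwidth(params.sampwidth)
        writer.setframerate(params.framerate)
        writer.writeframes(audio)
    return frames_to_keep
```

At a sampling rate of 44.1 kHz, the 5-second limit corresponds to 220,500 frames per channel.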

Apparatus

The stimuli were presented using a specially designed software program developed with the Trolltech Qt (Mac OS X–free edition) tool kit. The program ran on a Macintosh PowerPC G4 computer with a 17” Iiyama Pro454 monitor. Apple Pro Speakers with a frequency range of 70 Hz to 20 kHz and a maximum power of 10 Watts per channel were used to present the stimuli. The study took place in a quiet room.

Procedure

Before the study started, each participant received a brief explanation about the purpose of the study. A free categorization paradigm was used. That is, a participant’s task was to freely group the similar sounds.

Prior to the actual experimental session, a participant took a training session with animal sounds and human voices. The tasks in the training session were identical to the experiment; only the stimuli differed. In the experimental session, the sounds were presented as movable and listenable objects on the computer screen. A sound object remained dimmed until a participant listened to the sound. For every participant the sounds were presented with random numbering. A participant had to listen to all the sounds and freely group them on the screen. Each sound had to be assigned to exactly one category. After a participant heard all the sounds, s/he could advance to the next stage. In this stage, a participant had to create boxes into which the previously defined groups could be dragged. Each created box (i.e., sound group) had to be labeled by a participant. This label had to reflect how they would describe the group. Note that no instruction was given about what type of label a listener had to give (e.g., source, interaction event, or emotional experience). For each group, a participant set the degree of fit on a 7-point scale (how well do the sounds fit together?). A participant received an on-screen warning whenever s/he tried to proceed without completing a step.

Results

The minimum number of categories created was two and the maximum number of categories was nine in the grouping task. Sixteen participants created five categories. The mean for the category fit ratings was 4.98 and differed significantly from the midpoint of the scale (t(27) = 6.96, p < .001). This indicated that on average the participants were satisfied with their groups. The Proxscal procedure of MDS (SPSS) was used to analyze the individual participant categories. The categories (consisting of the product sounds) were entered as the variables and each participant as a source. Proxscal transformed the counts into proximities using a Chi-square distance measure (which is a normal procedure when determining relationships between frequency data) between the cases (i.e., the sounds). An Identity scaling model was used with an ordinal (untie tied observations) proximity transformation for each source separately. The Identity scaling model ensures that all sources (participants) have the same configuration. Employing a scaling model that weighs each source (participant) showed no strong individual effects. A 2-dimensional solution was chosen because a 3-dimensional solution resulted in only a minor decrease in Stress-I values from .08 to .07. The Dispersion Accounted For and Tucker’s coefficient of congruence were .993 and .996 respectively. These measures indicate that it is a good solution.
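To make the step from free-sorting data to proximities more concrete, the following minimal sketch builds a sound-by-sound dissimilarity matrix. It deliberately swaps in a simpler measure than the one reported above: instead of the Chi-square distance computed by Proxscal, it uses one minus the proportion of participants who placed two sounds in the same group. The function name and the example data are hypothetical.

```python
from itertools import combinations


def cooccurrence_dissimilarity(sortings, n_sounds):
    """Turn free-sorting data into a sound-by-sound dissimilarity matrix.

    `sortings` holds one partition per participant; each partition is a
    list of groups, and each group is a list of 0-based sound indices.
    Dissimilarity = 1 - proportion of participants who grouped the pair.
    (Assumption: a co-occurrence measure, not the Chi-square distance
    used in the study.)
    """
    counts = [[0] * n_sounds for _ in range(n_sounds)]
    for partition in sortings:
        for group in partition:
            for a, b in combinations(sorted(group), 2):
                counts[a][b] += 1
                counts[b][a] += 1
    n_participants = len(sortings)
    return [
        [0.0 if i == j else 1.0 - counts[i][j] / n_participants
         for j in range(n_sounds)]
        for i in range(n_sounds)
    ]


# Hypothetical data: three participants sorting four sounds.
example = [
    [[0, 1], [2, 3]],
    [[0, 1, 2], [3]],
    [[0], [1], [2, 3]],
]
matrix = cooccurrence_dissimilarity(example, 4)
```

A matrix of this kind could then be passed to any MDS routine; sounds that were never grouped together receive the maximum dissimilarity of 1.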

To be able to interpret the dimensions, the sounds were analyzed on their (psycho)acoustical features (sharpness, spectral centroid, harmonicity, SPL, and 39 critical band levels). These measurements were used in a factor analysis (Principal Component Analysis, PCA) that resulted in two factors, which characterize the (psycho)acoustical space of product sounds. Factor 1 was interpreted as a combination of sharpness and loudness and Factor 2 was interpreted as a combination of noisiness and low frequencies. Details of the (psycho)acoustical analysis and factor analysis can be found in the Appendix.

As explained in the Appendix, the (psycho)acoustical measures for very short sounds could not be determined because of their duration. Therefore, an additional MDS analysis was performed on the grouping data excluding these sounds. This was done to be able to interpret the categorization dimensions in terms of (psycho)acoustical measures. The MDS analysis again resulted in two dimensions with a Stress-I value of .11. These dimensions had a high correlation with categorical Dimensions 1 and 2 resulting from the analysis containing all sounds, r(19) = .96, p < .0001 and r(19) = .95, p < .0001, respectively. The MDS analysis without the short sounds thus corresponds to the MDS analysis including these sounds. Therefore, the solution is stable and we can interpret the dimensions by associating them with the results of the factor analysis described in the Appendix.

The regression weights stemming from the factor analysis (see Appendix) on the (psycho)acoustical measures were correlated with the two dimensions from the MDS analysis that did not contain the short sounds. Dimension-1 had a low correlation with Factor 1 (r(19) = -.02, p = NS) and a high correlation with Factor 2 (r(19) = .87, p < .0001). Dimension-2 had a higher correlation with Factor 1 (r(19) = -.34, p = NS) than with Factor 2 (r(19) = -.11, p = NS). Thus, high values on Dimension-1 are associated with low frequencies and a higher level of noise (because Harmonicity loads negatively on Factor 1) and high values on Dimension-2 are associated with higher levels of sharpness and loudness.
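As an illustration of how such correlations and their significance can be checked, the sketch below computes a Pearson correlation and the corresponding t statistic with df = n - 2 (so r(19) implies 21 sounds). The function names are hypothetical, and the code does not reproduce the authors' SPSS analysis; it only restates the standard formulas.

```python
import math


def pearson_r(x, y):
    """Pearson product-moment correlation between two equal-length lists."""
    n = len(x)
    if n != len(y) or n < 3:
        raise ValueError("need two lists of equal length with n >= 3")
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def t_from_r(r, df):
    """t statistic for testing a correlation against zero (df = n - 2)."""
    return r * math.sqrt(df / (1.0 - r * r))


# Reported values from the text: r(19) = .87 and r(19) = -.34.
t_high = t_from_r(0.87, 19)
t_low = t_from_r(-0.34, 19)
```

With 19 degrees of freedom the two-tailed critical value at the .05 level is about 2.09, so the first statistic (roughly 7.7) is clearly significant while the second (roughly -1.6) is not, in line with the reported p values.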

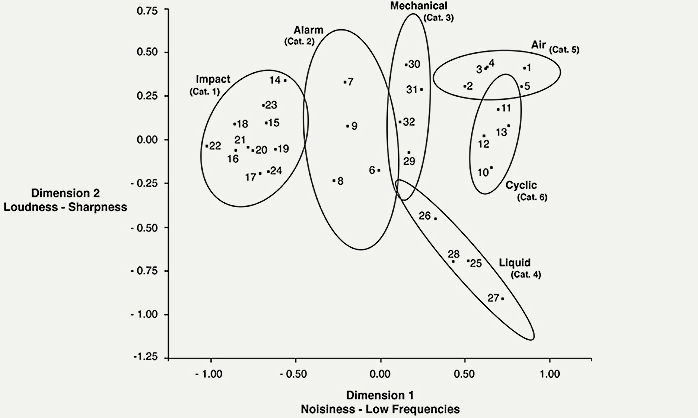

In Figure 1, the two dimensions of the MDS analysis are shown. Numbers in the figure indicate the product sounds presented in Table A1 in the Appendix. As can be seen in this figure, certain sounds are grouped closely together, which may reflect specific product sound categories. In order to determine these categories, a hierarchical cluster analysis using Ward’s method was conducted on the 2-dimensional coordinates of the MDS. This yielded six relevant clusters (product sound categories). Each category contained at least four sounds. In Figure 1 the categories are indicated by density ellipses (p < .95) encircling the sounds.

Figure 1. Product sound categories and their underlying dimensions. Dimension 1 represents Noisiness and Low-frequencies and Dimension 2 represents Loudness and Sharpness. (Numbers in the ellipses refer to the numbers that represent the product sounds in the Appendix; text in parentheses refers to the category numbers in the text).
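The clustering step applied to the MDS coordinates can be sketched as follows. This is a naive, assumption-laden illustration of Ward's criterion (merge the two clusters whose union gives the smallest increase in within-cluster sum of squares), not the statistical package used in the study; the function names and the example coordinates are hypothetical.

```python
def centroid(points):
    """Mean position of a list of equal-length coordinate tuples."""
    n = len(points)
    return tuple(sum(p[d] for p in points) / n for d in range(len(points[0])))


def ward_cost(a, b):
    """Increase in within-cluster sum of squares if clusters a and b merge."""
    ca, cb = centroid(a), centroid(b)
    squared_distance = sum((u - v) ** 2 for u, v in zip(ca, cb))
    return len(a) * len(b) / (len(a) + len(b)) * squared_distance


def ward_clusters(points, k):
    """Agglomerate `points` into `k` clusters using Ward's criterion."""
    if not 1 <= k <= len(points):
        raise ValueError("k must be between 1 and the number of points")
    clusters = [[tuple(p)] for p in points]
    while len(clusters) > k:
        best = None
        for i in range(len(clusters)):
            for j in range(i + 1, len(clusters)):
                cost = ward_cost(clusters[i], clusters[j])
                if best is None or cost < best[0]:
                    best = (cost, i, j)
        _, i, j = best
        clusters[i] = clusters[i] + clusters[j]  # merge the cheapest pair
        del clusters[j]
    return clusters


# Hypothetical 2-dimensional coordinates, not the study's MDS solution.
points = [(0, 0), (0, 1), (10, 10), (10, 11), (20, 0), (21, 0)]
clusters = ward_clusters(points, 3)
```

The naive pairwise search is cubic in the number of points, which is acceptable for a stimulus set of a few dozen sounds; larger sets would call for an optimized library routine.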

Category 1 contains short duration sounds caused by an impact between product parts (e.g., door closing). The sounds have a pulse-like character that on theoretical grounds (FFT) will result in a wide spectrum and also high frequencies. These sounds are positioned relatively low on Dimension 1, which means they will evoke a sense of sharpness. Category 2 contains mostly digitally produced alarm-like sounds. In Figure 1, it can be seen that these sounds are positioned relatively low on Dimension 1, which means they are not noisy and can evoke a sense of sharpness. Category 3 contains an old-fashioned alarm clock bell, a phonograph winding, a shaver, and an electric toothbrush sound. The products in this cluster are rather small in size. These sounds are the consequences of engines with high rpm, as well as small rotating and rubbing mechanical product parts. The sounds are positioned at the mid-point of Dimension 1, which means they have some noisiness in their spectral content, and are positioned relatively high on Dimension 2, which means they are sharp and loud sounds.

Category 4 contains coffee machine sounds (coffee boiling, water heating, water pouring) and an electric kettle (water boiling) sound. The products in this category contain liquids. These sounds are mostly caused by the heating of liquids. The sounds are positioned relatively high on Dimension 1, which means they contain low-frequencies in their spectral content and are somewhat noisy, and are positioned the lowest on Dimension 2, which means they are rather quiet and not sharp sounds. Category 5 contains the sounds of a vacuum cleaner, hand vacuum cleaner, washing machine, washing machine centrifuge cycle, and hair dryer sounds. These products are highly involved with air interaction due to the rotating fans used to blow or suck air. The sounds are positioned at the highest level on Dimension 1, which means they are noisy and consist of low frequencies, and at the highest level on Dimension 2, which means they are relatively sharp and loud sounds. Category 6 contains the sounds of a microwave oven, kitchen hood, dishwasher, and tumble-dryer. These products employ rotating parts which cause a cyclic event and produce a periodicity in the sound as a result. Some of the products in this category employ liquids. The sounds in this category are positioned relatively high on Dimension 1, which means they are rather low and noisy sounds, and are positioned lower than Category 5 on Dimension 2, which means they are less loud and contain lower frequencies.

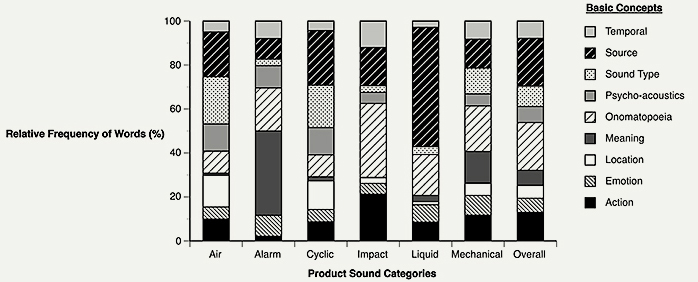

Category Labels & Basic Concepts

In order to understand what the categories conceptually meant to participants, we analyzed the labels participants provided for each category they had created. First, these labels were classified into basic concepts. If a label consisted of more than one word, it was split into meaningful sections (e.g., unpleasant mechanical sounds into unpleasant and mechanical). The resulting words were analyzed to determine conspicuous patterns in product sound category labels. For example, words such as shaver, hairdryer, and coffee machine were grouped as one concept (i.e., source), and words such as rotating, blowing, and cleaning were grouped as another concept (i.e., action). These patterns were found by iteratively going through all the labels participants provided and assigning each label to a single concept. For the analysis, it was important that all the different labels in one group coherently denoted one concept. This analysis produced nine different concepts: action, emotion, location, meaning, onomatopoeia, psychoacoustics, sound type (i.e., the means by which the sound is produced), source, and temporal. Finally, each extracted label was scored as 1 for the concept to which it corresponded. For example, the words unpleasant and mechanical were each scored as 1 in the emotion and sound type concepts, respectively. Figure 2 presents the relative frequency of words as a function of basic concepts and product sound categories. In this figure, the bar labeled overall represents the relative frequency of words for all product sound categories. This overall measure shows that source descriptions and onomatopoeias are the most frequently used concepts (21.69%), followed by action (12.98%) and sound type (9.29%) concepts.
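The scoring step described above can be sketched as follows. This is an illustration only: the small lexicon is assembled from the example words mentioned in the text, the helper names (`score_labels`, `relative_frequency`) are hypothetical, and the study's full coding scheme is not reproduced.

```python
from collections import Counter

# Hypothetical word-to-concept lexicon built from examples in the text.
LEXICON = {
    "shaver": "source", "hairdryer": "source", "coffee machine": "source",
    "rotating": "action", "blowing": "action", "cleaning": "action",
    "unpleasant": "emotion",
    "mechanical": "sound type",
}


def score_labels(words):
    """Score 1 per word for the concept it maps to; skip unknown words."""
    return Counter(LEXICON[w] for w in words if w in LEXICON)


def relative_frequency(counts):
    """Convert concept counts to percentages that sum to 100."""
    total = sum(counts.values())
    return {concept: 100 * n / total for concept, n in counts.items()}


# Multi-word labels are assumed to have been split into sections beforehand.
words = ["unpleasant", "mechanical", "shaver", "hairdryer", "rotating"]
counts = score_labels(words)
freqs = relative_frequency(counts)
```

Here two of the five words map to source, so source accounts for 40% of the scored words and each of the other three concepts accounts for 20%.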

Figure 2. Relative frequency of words as a function of basic concepts and of product sound categories. (The cumulative percentage over descriptive groups adds up to 100%.)

Secondly, the occurring concepts were analyzed to see how frequently they were used to describe individual sound categories. Category 1 was mostly described by onomatopoeias (click, bang), action (opening, impact), sound source (switch, door), and temporal (short) descriptions. Because the descriptions indicate impacting events, this category was called impact sounds. Category 2 was mostly described by meaning (warning, attention), onomatopoeia (buzzer, beep), psychoacoustics (loud, high-pitched), and emotion descriptions (obtrusive, annoying). Because the majority of the descriptions indicated an alarming situation, this category was named alarm sounds. Category 3 was represented by multiple concepts such as onomatopoeias (rattling), meaningful associations (hygiene), sound source (shaver, toothbrush), sound type (mechanical), and action (rotating, adjusting) descriptions. Because the descriptions indicate mechanism-related events and products that involve mechanical structures, this category was called mechanical sounds. Category 4 was mostly described by source (water, coffee), onomatopoeia (bubbling, hissing), action (pouring, filling), and emotion (pleasant) descriptions. Because the descriptions indicate liquid-related events, this category was named liquid sounds. Category 5 was mostly described by sound type (air, aerodynamic), sound source (hair dryer, vacuum cleaner), and location descriptions (bathroom, bedroom). Because all these descriptions indicate events and products related to air, this category was named air sounds. Category 6 was mostly described by sound source (dishwasher, microwave oven), sound type (air, liquid), psychoacoustics (low-pitched, soft), and location (launderette, kitchen) descriptions. These descriptions resemble the descriptions of the sounds in Category 5. However, these sounds can be distinguished from them by their fluctuating temporal properties, which indicate a rotating event. Therefore, this category was named cyclic sounds.

Discussion

Six categories have emerged within the domain of product sounds. These product sound categories are identified as air, alarm, cyclic, impact, liquid, and mechanical. The occurring product sound categories resemble the categories proposed for the environmental sound domain such as air, liquid / water, impacting solids, complex events such as machines, and signaling sounds (Ballas, 1993; Gaver, 1993a; Gaver, 1993b).

The acoustical correlates of product sound similarity indicate that product sound categories are differentiated by the amount of noise and low-frequencies in their spectral structure. Categories such as impact and alarm sounds are not noisy; however, mechanical, air and cyclic sounds contain lower frequencies and noisiness in their structure. Within a product sound category, it is the perceived sharpness and loudness that mainly distinguishes between the category members. The sharpness–loudness dimension also distinguishes between air, cyclic, and liquid sounds. In general, the occurring acoustical dimensions indicate that product sounds are characterized by a large frequency range with high-intensity and are therefore perceived as loud, sharp, and noisy. However, there is a distinction among categories. Electric-powered products that cause air, cyclic, and mechanical sounds have a larger frequency range than impact and alarm sounds, which are perceived to be less noisy and sharp. Liquid sounds are the only sound category that is not perceived as loud and sharp and alarm,

(Psycho)acoustical differentiation of the product sounds is not sufficient to understand conceptual differentiation. The results of the labeling task provided us with some insights into how sounds are conceptually represented. Nine different basic concepts were derived from the category labels: action, emotion, location, meaning, onomatopoeia, psychoacoustics, sound type, source, and temporal. Conceptually, listeners’ strategies in finding similarities among product sounds appear to be based mainly on sound source and secondly on perceived auditory similarity (i.e., onomatopoeias). However, certain concepts seem to be prominent for only certain categories. For example, impact sounds mainly elicit action and temporal concepts; air and cyclic are differentiated better by location related concepts.

Both the results of the (psycho)acoustical analysis and the labeling task on product sounds indicate that there are perceptually relevant product sound categories. Categories seem to be well-distinguished both on (psycho)acoustical and conceptual grounds. It appears that the use of free categorization paradigms mainly produced categories resulting from both cognitive (i.e., source description) and acoustical analyses (i.e., onomatopoeias). Thus, listeners may be using alternating strategies to find similarity among product sounds, and the shift in strategies may depend on whether or not the source information is available.

Experiment 2—Constituents of Similarity Judgments

Employing a pairwise comparison paradigm, in Experiment 2 we further explored the underlying processes in similarity judgments. The two objectives of this experiment were (1) to see whether the categories found in Experiment 1 are robust against a different paradigm and (2) to determine the main strategies used for product sound categorization. The nine concepts from Experiment 1 were used in Experiment 2 as possible strategies for similarity judgments.

Because the main objective of this experiment was to double-check whether the category borders would remain the same, the product sound categories (namely, impact and alarm sounds) that are obviously dissimilar both in acoustical content and sound sources were excluded from the pairwise comparison trials in Experiment 2.

Method

Participants

Eighty students of the Delft University of Technology participated. The average age of the participants was 22 years. All participants reported normal hearing. Students voluntarily participated and were paid.

Stimuli

Nineteen product sounds that shared high acoustical similarities were selected to represent four of the six product sound categories defined in Experiment 1. These categories were air, cyclic, liquid, and mechanical sounds. The sounds were recordings of various electrical domestic appliances in operation. Half of the sounds were identical to those of Experiment 1 and the other half were recorded in home conditions using a recording apparatus (Boss BR-532) with a Sennheiser e865 microphone with a frequency response of 40 Hz – 20 kHz and a free-field sensitivity of 3 mV/Pa. The sounds were edited on a Macintosh PowerPC G4 computer using the sound-editing program Sound Studio. Sounds longer than 5 seconds were trimmed to a maximum duration of 5 seconds and saved in a stereo format with a sampling rate of 44.1 kHz and 16 bits. The loudness levels were adjusted to a comfortable listening level for each sound. The participants were not allowed to change the sound levels during the experiment.

Apparatus

The stimuli and the descriptive words were presented by a specially designed software application on a Macintosh PowerBook G4 computer with a 12” screen through Sony MDR-CD550 headphones. The experiment took place in a quiet room.

Procedure

Before the study started, each participant received a brief explanation about the purpose of the study. The experiment had two phases. In the first phase, experienced similarities were rated based on a pairwise comparison task; in the second phase, the most frequently used strategies (product sound related basic concepts) were rated on a questionnaire. Participants were not told about the second phase of the experiment beforehand.

For the similarity judgment task (i.e., pairwise comparison), pairs of product sounds were prepared. For that, sounds were divided into two groups: similar sounds and dissimilar sounds. Similar sounds belong to one single sound category defined in Experiment 1 (e.g., a shaver and a toothbrush sound would be similar sounds because they both belong to the Mechanical sound category) and dissimilar sounds belong to two different sound categories defined in Experiment 1 (e.g., a shaver sound belongs to the Mechanical sound category and a hairdryer sound belongs to the Air sound category). All combinations of the similar and dissimilar sounds were created (repetition was not allowed, presentation order was not important, and the two sounds were randomly drawn) using the formula n! / ((n - r)! r!) [n = number of all sounds (19) and r = sounds chosen for pairing (2)]. The result was 171 pairs of sounds. Because rating 171 pairs takes about one hour and such prolonged concentration would hinder the perceptual capacity of the participants, only 48 of the 171 sound pairs were randomly selected for each participant's similarity judgment task. Participants took an average of 15 minutes to make their judgments. Each pair was presented 20 times across the participants using a Monte Carlo method (Press, Teukolsky, Vetterling, & Flannery, 1995). A participant received the sound pairs on a screen as two different sound buttons on which “sound 1” and “sound 2” were written. The order of the sound pairs and the order of the sounds within a pair were randomized for each participant. Then, a participant listened to each of the sounds and rated their similarity on a 6-point scale (1–very dissimilar, 6–very similar). This was repeated for all 48 sound pairs.
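As a check on the pair count, and to illustrate one way of drawing a participant's trials, consider the minimal sketch below. The sound IDs and the helper `draw_trials` are hypothetical. The sketch samples 48 pairs independently for each participant; it does not reproduce the Monte Carlo procedure the study used to balance how often each pair was presented across participants.

```python
import itertools
import math
import random

N_SOUNDS = 19               # number of stimuli reported in the text
PAIR_SIZE = 2               # sounds per pair
PAIRS_PER_PARTICIPANT = 48  # pairs rated by each participant, as reported

# Unordered pairs without repetition: n! / ((n - r)! r!)
n_pairs = math.comb(N_SOUNDS, PAIR_SIZE)

# Enumerate every pair explicitly; sound IDs 1..19 are placeholders.
all_pairs = list(itertools.combinations(range(1, N_SOUNDS + 1), PAIR_SIZE))


def draw_trials(rng):
    """Draw one participant's trials (hypothetical helper)."""
    # random.sample picks distinct pairs and returns them in random order.
    chosen = rng.sample(all_pairs, PAIRS_PER_PARTICIPANT)
    # Randomize which sound appears as "sound 1" and which as "sound 2".
    return [pair if rng.random() < 0.5 else (pair[1], pair[0]) for pair in chosen]


trials = draw_trials(random.Random(0))
print(n_pairs, len(all_pairs), len(trials))
```

Both the closed-form count and the explicit enumeration give 171 pairs for 19 sounds, which matches the number reported in the text.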

A questionnaire followed the similarity judgment task asking “on what basis have you found similarities between the sound pairs?” and listing the following nine product sound related basic concepts: action, emotion, location, meaning, onomatopoeia, psychoacoustics, sound type, source, and temporal. Next to each basic concept a couple of examples were provided to facilitate the participants’ decision (e.g., for temporal aspects continuous, repetitive, multiple, single, constant). A participant indicated how frequently (1 indicating never, 5 indicating always) they found a similarity between the sound pairs on a 5-point scale allocated for each of the basic concepts.

Results

Similarity Judgment

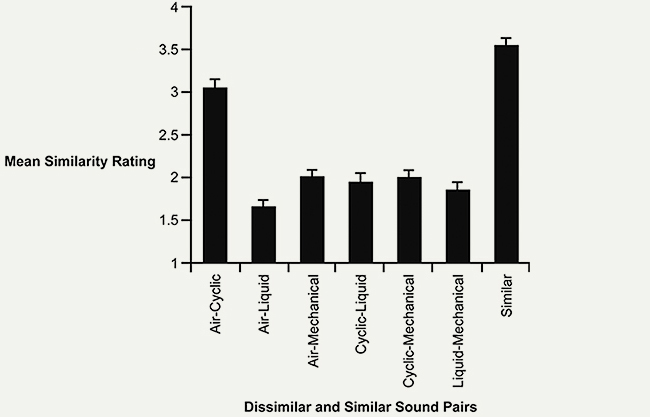

It was first determined if the sounds in the pair belonged to the same sound category or to different sound categories. The sounds that belonged to the same sound category were labeled as similar (e.g., a shaver and a toothbrush sound both belong to the mechanical sound category, therefore they are similar). The sounds that belonged to two different sound categories were labeled as dissimilar (e.g., a shaver and a hairdryer sound respectively belong to the mechanical sound category and the air sound category, therefore they are dissimilar). Thus, the similarity ratings were averaged over the similar and dissimilar sound pairs. Similar sound pairs (M = 3.55, SE = .07) were rated significantly higher than dissimilar sound pairs (M = 2.10, SE = .09), F(1, 78) = 481.91, p < .001.

An additional analysis was conducted in which the dissimilar sound pairs were differentiated by their respective sound categories. The following seven pairs (dissimilar and similar) resulted: air–cyclic, air–liquid, air–mechanical, cyclic–liquid, cyclic–mechanical, liquid–mechanical, and similar (e.g., air–air or mechanical–mechanical). For example, if the pair consisted of a shaver (mechanical sound) and a hair dryer sound (air sound), the label mechanical–air was assigned to this dissimilar sound pair; or if the pair consisted of a shaver sound and toothbrush sound (both mechanical sounds) the label mechanical–mechanical was assigned to the similar sound pair. This was done for all possible combinations. The sound pairs that contained the same sound categories but differed in the order of sound presentation (e.g., air–mechanical vs. mechanical–air) were treated equally. The sound pairs that contained sounds from one sound category (e.g., mechanical–mechanical) were labeled as similar. The similarity ratings were averaged for each dissimilar sound pair (air–cyclic, air–liquid, air–mechanical, cyclic–liquid, cyclic–mechanical, liquid–mechanical) and the similar sound pairs (air–air, liquid–liquid, etc.).
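The labeling rule above (same category gives similar; otherwise an order-independent category pair) can be sketched as follows. The sound-to-category mapping and the ratings are invented for illustration, and the helper names are hypothetical. Unlike the study, which averaged ratings per participant before the analysis, this sketch simply pools all ratings per label.

```python
from collections import defaultdict

# Hypothetical sound-to-category mapping, for illustration only.
CATEGORY = {
    "shaver": "mechanical",
    "toothbrush": "mechanical",
    "hair dryer": "air",
    "vacuum cleaner": "air",
    "kettle": "liquid",
}

# (sound 1, sound 2, rating on the 6-point scale); made-up values.
ratings = [
    ("shaver", "toothbrush", 4),
    ("hair dryer", "shaver", 2),
    ("shaver", "vacuum cleaner", 3),
    ("kettle", "hair dryer", 1),
    ("vacuum cleaner", "hair dryer", 5),
]


def pair_label(sound_a, sound_b):
    """Return 'similar' for same-category pairs, else an order-independent label."""
    cat_a, cat_b = CATEGORY[sound_a], CATEGORY[sound_b]
    if cat_a == cat_b:
        return "similar"
    # Sorting makes air-mechanical and mechanical-air the same label.
    return "-".join(sorted((cat_a, cat_b)))


def mean_by_label(rows):
    """Average the ratings within each pair label."""
    grouped = defaultdict(list)
    for sound_a, sound_b, rating in rows:
        grouped[pair_label(sound_a, sound_b)].append(rating)
    return {label: sum(vals) / len(vals) for label, vals in grouped.items()}


means = mean_by_label(ratings)
```

Sorting the two category names before joining them is what treats presentation order as irrelevant.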

Figure 3 presents the mean similarity ratings as a function of the dissimilar sound pairs and similar sound pairs. According to the figure, the similar sound pairs had the highest similarity rating (3.55). Among the dissimilar sound pairs, the air–cyclic sound pair had the highest similarity rating (3.06), followed by air–mechanical (2.01), cyclic–mechanical (2.00), and cyclic–liquid (1.95). The air–liquid sound pair had the lowest similarity rating (1.66), followed by the liquid–mechanical sound pair (1.86).

Figure 3. Mean similarity ratings of sound pairs of dissimilar and similar sound categories.

The averaged similarity ratings per participant were analyzed by an ANOVA with similarity type as the within-subjects factor (7 levels). A significant effect was found for similarity type, F(6, 468) = 147.58, p < .001. Planned comparisons (using LSD) were conducted to determine which sound pairs differed significantly. Similar sound pairs differed significantly from the dissimilar sound pairs (p < .001). Air–cyclic sound pairs differed significantly from the other dissimilar sound pairs (p < .001). In addition, air–liquid differed significantly from cyclic–liquid (p < .05) and differed significantly from air–cyclic, air–mechanical, cyclic–liquid, and cyclic–mechanical (p < .001).

Strategy Ratings

The strategy ratings were averaged across participants for each of the nine concepts. The mean values for the strategy ratings are: psychoacoustics (M = 3.87, SE = .13), action descriptions (M = 3.65, SE = .13), onomatopoeias (M = 3.65, SE = .14), sound source descriptions (M = 3.11, SE = .16), sound type (M = 3.06, SE = .15), temporal descriptions (M = 3.03, SE = .15), emotion (M = 2.62, SE = .15), abstract meaning (M = 2.10, SE = .14), and location descriptions (M = 2.06, SE = .13).
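For readers unfamiliar with the M and SE notation, the sketch below computes a mean and a standard error of the mean for one concept. It assumes the conventional definition (sample standard deviation divided by the square root of the number of ratings); the text does not state how SE was computed, and the ratings shown are made up.

```python
import math
import statistics


def mean_and_se(values):
    """Return the mean and the standard error of the mean (sample SD / sqrt(n))."""
    n = len(values)
    return statistics.mean(values), statistics.stdev(values) / math.sqrt(n)


# Made-up 5-point ratings for one concept (not the study's data).
ratings = [4, 5, 3, 4, 4, 2, 5, 4]
m, se = mean_and_se(ratings)
```

Applying the same function to each concept's column of ratings would give one M and SE pair per concept, in the form reported above.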

The strategy rating data were further analyzed using a hierarchical cluster analysis (using Ward’s method). The analysis yielded 4 clusters: (1) Action, Source, and Sound Type; (2) Onomatopoeia, Psychoacoustics, and Temporal; (3) Emotion; and (4) Location and Meaning.

Discussion

The pairwise comparison paradigm resulted in high similarities within a product sound category and dissimilarities between different product sound categories. Only air and cyclic sounds are judged as similar, which was also the case in Experiment 1, as the category borders of these sound categories were observed to overlap (see Figure 1). Therefore, we can conclude that the product sound categories determined in Experiment 1 are robust against a different paradigm.

Slight differences have been observed with respect to the strategies used for similarity judgments in Experiment 2. Psychoacoustics being the highest rated strategy is consistent with the literature (Aldrich et al., 2009; Gygi et al., 2007), which indicates that pairwise comparison is more likely to produce acoustical similarity. However, the occurrence of four distinct types of similarity judgments further suggests that listeners adjust their strategies depending on the sound pairs. Perhaps if the sounds in a pair are recognized and (partially) identified, listeners base the similarity on a cognitive level. Consequently, source, action, and sound type concepts become activated and can be used to make judgments. However, when listeners do not recognize the sounds in a pair, they may refer to the available spectral-temporal structure of the sounds and base their similarity judgments on a perceptual level. Information on auditory features (e.g., how repetitive or sharp the sounds are) will always be available for the sounds to be judged on.

Similarity judgments also occur on an emotional level if the emotional responses are more salient than any activated perceptual or cognitive representations. Emotional judgments, however, may be a result of the perceptual or cognitive processing of the sound (Ortony, Clore, & Collins, 1988; van Egmond, 2004; van Egmond, Desmet, & van der Helm, 2005; Västfjäll, Kleiner, & Gärling, 2003). These results do not indicate at which level emotional judgments have occurred. For example, the sound of an epilator may be judged unpleasant because it is rough and loud or because of the knowledge that the product itself causes pain when in use. Finally, it appears that product sounds are meaningful entities within a certain context and meanings attributed to them may go beyond sound source information.

General Discussion

The practice of product sound design has been lacking essential knowledge regarding how product sounds are mentally represented. Our research has provided strong hints that product sounds are meaningful entities with conceptual representations, and that the activation of these concepts depends on the sound type. With this explorative study, we have discerned six perceptually distinct categories of product sounds (air, alarm, cyclic, impact, liquid, mechanical) and determined nine basic concepts (sound source, action, location, sound type, onomatopoeias, psychoacoustics, temporal descriptions, emotions, abstract meaning) that underlie the conceptual network for the sound categories. Furthermore, we have found that the formation of these categories is not only a direct consequence of perceptual similarities in the spectral-temporal structure but also of similarities based on cognitive, emotional, and contextual evaluations of the sound.

As expected, our findings are that perceptions of similarity are flexible and context dependent (Goldstone, 1994) and that several perceptual and cognitive mechanisms perform a categorization function (Eme & Marquer, 1998; Medin & Barsalou, 1987). Considering people’s responses to environmental sounds, it was also expected that sound source/events would be the prevailing concept in the conceptual network of product sounds. However, perceptual judgment of product sounds seems to occur as frequently as determining the sound source. Thus, two main operations may take place: the processing of product sounds can remain at the sensory level or can lead to a perception of an object with conceptual associations. This study cannot explain when and how these processes take place. However, an interpretation can be made similar to Vanderveer’s (1979). That is, if a sound is identified, source information becomes important and if no identification occurs, then the spectral-temporal structure of the sounds is available for describing the auditory perception.

Practical Implications

With this study we have opened new avenues for design thinking by demonstrating that sound is an intrinsic property of a product. With sufficient perceptual training, designers can learn to include the auditory properties of products in their thinking, and include sound in their imagery when creating product concepts (similar to imagery with visual product properties). The findings provide designers with insights into a conceptual network of product sounds and their acoustical structure. This conceptual network can serve as a starting point for sound related technical and conceptual communication. Overall, we hope to have increased awareness and sensitivity to the auditory properties of products.

The knowledge derived from our study can be used by (sound) designers at several points in the design process. First, designers can have an overview of what kind of sounds to expect from products (i.e., product sound categories). By assigning a category to a product sound, they will also have quick information on the acoustical structure of a sound and its psychoacoustical and conceptual correlates. The conceptual network that this study identified does not signify an exact semantic correspondence of concepts. For example, if air sounds are location oriented or alarm sounds evoke abstract meanings, it is a designer’s task to specify a location (e.g., bathroom, kitchen) or an abstract meaning (e.g., danger, friendly warning).

Furthermore, designers can use the categories as a guide in order to avoid shifting away from intended concepts. That is, acoustically it is possible to change the sound of a product; however, changes in the acoustical content of the sound will have direct consequences on activated conceptual associations and corresponding semantic associations. For example, if the roughness component in a mechanical sound is eliminated in order to make it more pleasant, the resulting sound may resemble an air or a cyclic sound and can therefore (unintentionally) activate semantic associations appropriate for air or cyclic sounds.

Emotional responses are also part of the sound activated conceptual network. However, it is not yet clear what causes emotional responses: whether they are caused by early sensory processes (e.g., psychoacoustical dispositions of the sound) or cognitive processes (e.g., certain concepts). As emotions are an important component of user experiences with products (Desmet, 2002), sound designers should pay attention to the real cause of the emotion. Furthermore, designers very often aim to make a product context relevant. A product should fit the locations and situations in which it is used. Sound as an integral part of a product is also part of the context of use. Its effect on the environment should be considered and its fittingness ensured. Consequently, emotional responses to product sounds could also be facilitated by the contextual information surrounding the sounds (Özcan, 2014).

One final remark is that we have presented the relationship between a sound and its source as a product. Therefore, designers should try to ensure the suitability of the sound to the product, as previously suggested by Blauert and Jekosch (1997). For example, a shaver should first sound like a shaver; but if the visual aspects of the product indicate a desirable experience (e.g., elegance), designers can try to manipulate the sound towards elegance within the limits of the perceptual boundaries for a shaver sound. The methods presented in the paper for estimating perceptual similarity could be useful to define these boundaries and to collect the associations people have with manipulated sounds. Thus, there is space for a designer to alter the sounds within the category borders that are established in this study. However, the category borders of air and cyclic sounds seem to be vaguer than other category borders. Therefore, ambiguities in sound identification can occur for such sounds. It is critical that the result of the sound identification should at least correspond to the expectations evoked by the product identity.

Future Studies

We have presented an overview of the conceptual associations that product sounds may have. In this paper, sound source has emerged as one of the concepts listeners use to identify a sound, but this has not been thoroughly investigated. A more specific study on how well product sounds are (lexically) identified is still needed. We have also argued that the descriptions of product sounds that have been produced are a result of different stages in an identification process. Future studies may investigate more systematically the relationship between semantic associations and the stages of the identification process.

Considering that both auditory and source-related visual information constitute the conceptual network of product sounds, it seems plausible that audio-visual interactions occur during sound identification. However, to what extent visual information has an additive, complementary and/or inhibitory influence on product sound identification needs to be investigated further.

Acknowledgments

We gratefully acknowledge the help of Joline Boschman, Linda Rindertsma, Tim Pellikaan, and Rob Langendijk for running the experiments. We also wish to thank Alexandre Vieira for voluntarily programming the experimental software during his free time.

Endnotes

- 1. A product can be any man-made object that serves as an extension of the human body. In this paper, we regard products as domestic appliances.

- 2. Praat is a free software program for the acoustical analysis of phonetics. Paul Boersma and David Weenink implemented the program (see, www.praat.org).

- 3. Psysound is a psychoacoustical analysis program. For reliable measuring, it was calibrated by the SPLs of each sound for the analysis of the psycho-acoustical parameters. (see, http://farben.latrobe.edu.au/mikropol/volume5/cabrerad/PsySound.html).

References

- Aldrich, K., Hellier, E., & Edworthy, J. (2009). What determines auditory similarity? The effect of stimulus group and methodology. Quarterly Journal of Experimental Psychology, 62(1), 63-83.

- Aures, W. (1985). Berechnungsverfahren für den sensorischen Wohlklang beliebiger Schallsignale. [A model for calculating the sensory euphony of various sounds]. Acta Acustica united with Acustica, 59(2), 130-141.

- Ballas, J. A. (1993). Common factors in the identification of an assortment of brief everyday sounds. Journal of Experimental Psychology: Human Perception and Performance, 19(2), 250-267.

- Benko, U., Petrovcic, J., Juricic, D., Tavcar, J., Rejec, J., & Stefanovska, A. (2004). Fault diagnosis of a vacuum cleaner motor by means of sound analysis. Journal of Sound and Vibration, 276(3-5), 781-806.

- Bergman, P., Sköld, A., Västfjäll, D., & Fransson, N. (2009). Perceptual and emotional categorization of sound. Journal of the Acoustical Society of America, 126(6), 3156-3167.

- Bisping, R. (1997). Car interior sound quality: Experimental analysis by synthesis. Acta Acustica united with Acustica, 83(5), 813-818.

- Björk, E. A. (1985). The perceived quality of natural sounds. Acta Acustica united with Acustica, 57(3), 185-188.

- Blauert, J., & Jekosch, U. (1997). Sound-quality evaluation: A multi-layered problem. Acta Acustica united with Acustica, 83(5), 747-753.

- Bonebright, T. L. (2001). Perceptual structure of everyday sounds: A multidimensional scaling approach. In Proceedings of the International Conference on Auditory Display (pp. 73-78). Espoo, Finland: ICAD.

- Bonebright, T. L., & Flowers, J. H. (2011). Evaluation of auditory display. In T. Hermann, A. Hunt, & J. G. Neuhoff (Eds.), The sonification handbook (pp. 111-144). Berlin, Germany: Logos Publishing House.

- Chouard, N., & Hempel, T. (1999). A semantic differential design especially developed for the evaluation of car interior sounds. Journal of the Acoustical Society of America, 105(2), 1280.

- Cleary, A. M. (2002). Recognition with and without identification: Dissociative effects of meaningful encoding. Memory & Cognition, 30(5), 758-767.

- Crilly, N., Moultrie, J., & Clarkson, P. J. (2004). Seeing things: Consumer response to the visual domain in product design. Design Studies, 25(6), 547-577.

- Cummings, A., Ceponiene, R., Koyama, A., Saygin, A. P., Townsend, J., & Dick, F. (2006). Auditory semantic networks for words and natural sounds. Brain Research, 1115(1), 92-107.

- Desmet, P. M. A. (2002). Designing emotions. Doctoral dissertation, Delft University of Technology, Delft, the Netherlands.

- Desmet, P. M. A., & Hekkert, P. (2007). Framework of product experience. International Journal of Design, 1(1), 57-66.

- Dubois, D. (2000). Categories as acts of meaning: The case of categories in olfaction and audition. Cognitive Science Quarterly, 1, 35-68.

- Dubois, D., Guastavino, C., & Raimbault, M. (2006). A cognitive approach to soundscape: Using verbal data to access everyday life auditory categories. Acta Acustica united with Acustica, 92(6), 865-874.

- Edworthy, J., Hellier, E., & Hards, R. (1995). The semantic associations of acoustic parameters commonly used in the design of auditory information and warning signals. Ergonomics, 38(11), 2341-2361.

- Eme, P. E., & Marquer, J. (1998). Quantitative and qualitative individual differences in visual comparison processing: Are strategies only analytic or holistic? Personality and Individual Differences, 25(3), 459-475.

- Fabiani, M., Kazmerski, V. A., Cycowicz, Y. M., & Friedman, D. (1996). Naming norms for brief environmental sounds: Effects of age and dementia. Psychophysiology, 33(4), 462-475.

- Franinović, K., & Serafin, S. (2013). Sonic interaction design. Cambridge, MA: MIT Press.

- Frauenberger, C., & Stockman, T. (2009). Auditory display design: An investigation of a design pattern approach. International Journal of Human-Computer Studies, 67(11), 907-922.

- Gaver, W. W. (1993a). What in the world do we hear? An ecological approach to auditory event perception. Ecological Psychology, 5(1), 1-29.

- Gaver, W. W. (1993b). How do we hear in the world? Explorations in ecological acoustics. Ecological Psychology, 5(4), 285-313.

- Giordano, B. L., Susini, P., & Bresin, R. (2013). Perceptual evaluation of sound-producing objects. In K. Franinović & S. Serafin (Eds.), Sonic interaction design (pp. 151-197). Cambridge, MA: MIT Press.

- Goldschmidt, G., & Sever, A. L. (2011). Inspiring design ideas with texts. Design Studies, 32(2), 139-155.

- Goldstone, R. L. (1994). The role of similarity in categorization: Providing a groundwork. Cognition, 52(2), 125-157.

- Guastavino, C. (2007). Categorization of environmental sounds. Canadian Journal of Experimental Psychology, 60(1), 54-63.

- Gygi, B., Kidd, G. R., & Watson, C. S. (2004). Spectral-temporal factors in the identification of environmental sounds. Journal of the Acoustical Society of America, 115(3), 1252-1265.

- Gygi, B., Kidd, G. R., & Watson, C. S. (2007). Similarity and categorization of environmental sounds. Perception & Psychophysics, 69(6), 839-855.

- Hekkert, P., Snelders, D., & van Wieringen, P. C. W. (2003). ‘Most advanced, yet acceptable’: Typicality and novelty as joint predictors of aesthetic preference. British Journal of Psychology, 94, 111-124.

- Hermann, T., Hunt, A., & Neuhoff, J. G. (2011). The sonification handbook. Berlin, Germany: Logos Publishing House.

- Isen, A. M., & Daubman, K. A. (1984). The influence of affect on categorization. Journal of Personality and Social Psychology, 47(6), 1206-1217.

- Kavakli, M. (2001). Sketching as mental imagery processing. Design Studies, 22(4), 347-364.

- Keiper, W. (1997). Sound quality evaluation in the product cycle. Acta Acustica united with Acustica, 83(5), 784-788.

- Kendall, R. A., & Carterette, E. C. (1993). Verbal attributes of simultaneous wind instrument timbres: I. von Bismarck’s adjectives. Music Perception, 10(4), 445-468.

- Kidd, G. R., & Watson, C. S. (2003). The perceptual dimensionality of environmental sounds. Noise Control Engineering Journal, 51(4), 216-231.

- Lakoff, G., & Johnson, M. (1980). Metaphors we live by. Chicago, IL: The University of Chicago Press.

- Lemaitre, G., Houix, O., Visell, Y., Franinović, K., Misdariis, N., & Susini, P. (2009). Toward the design and evaluation of continuous sound in tangible interfaces: The Spinotron. International Journal of Human-Computer Studies, 67(11), 976-993.

- Letens, U. (2000). Exemplarische Darstellung einer subjektiven Beurteilung von PKW-Fahrgeräuschen [Exemplary representation of a subjective assessment of car-driving noises]. Paper presented at the Fortschritte der Akustik DAGA 2000, Oldenburg, Germany.

- Lyon, R. H. (2000). Designing for product sound quality. New York, NY: Marcel Dekker.

- Marcell, M. E., Borella, D., Greene, M., Kerr, E., & Rogers, S. (2000). Confrontation naming of environmental sounds. Journal of Clinical and Experimental Neuropsychology, 22(6), 830-864.

- McCauley, C., Parmelee, C. M., Sperber, R. D., & Carr, T. H. (1980). Early extraction of meaning from pictures and its relation to conscious identification. Journal of Experimental Psychology: Human Perception and Performance, 6(2), 265-276.

- Medin, D. L., Lynch, E. B., & Solomon, K. O. (2000). Are there kinds of concepts? Annual Review of Psychology, 51, 121-147.

- Medin, D. L., & Barsalou, L. W. (1987). Categorization processes and categorical perception. In S. Harnad (Ed.), Categorical perception: The groundwork of perception. New York, NY: Cambridge University Press.

- Melcher, J. M., & Schooler, J. W. (2004). Perceptual and conceptual training mediate the verbal overshadowing effect in an unfamiliar domain. Memory & Cognition, 32(4), 618-631.

- Mervis, C. B., & Rosch, E. (1981). Categorization of natural objects. Annual Review of Psychology, 32, 89-115.

- Muller, W. (2001). Order and meaning in design. Utrecht, The Netherlands: Lemma Publishers.

- Murphy, G. L. (1996). On metaphoric representation. Cognition, 60, 173-204.

- Murphy, G. L. (2002). The big book of concepts. Cambridge, MA: MIT Press.

- Niedenthal, P. M., Halberstadt, J. B., & Innes-Ker, A. H. (1999). Emotional response categorization. Psychological Review, 106(2), 337-361.

- Ortony, A., Clore, G. L., & Collins, A. (1988). The cognitive structure of emotions. Cambridge, UK: Cambridge University Press.

- Oxman, R. (2002). The thinking eye: Visual re-cognition in design emergence. Design Studies, 23(2), 135-164.

- Özcan, E., & van Egmond, R. (2009). The effect of visual context on the identification of ambiguous environmental sounds. Acta Psychologica, 131(2), 110-119.

- Özcan, E., & van Egmond, R. (2012). Basic semantics of product sounds. International Journal of Design, 6(2), 41-54.

- Özcan, E. (2014). The Harley effect: Internal and external factors that facilitate positive experiences with product sounds. Journal of Sonic Studies, 6(1), a07.

- Paivio, A. (1991). Dual coding theory: Retrospect and current status. Canadian Journal of Psychology - Revue Canadienne De Psychologie, 45(3), 255-287.

- Purcell, A. T., & Gero, J. S. (1998). Drawings and the design process. Design Studies, 19(4), 389-430.

- Porteous, D., & Mastin, J. F. (1985). Soundscape. Journal of Architectural and Planning Research, 2(3), 169-186.

- Press, W. H., Teukolsky, S. A., Vetterling, W. T., & Flannery, B. P. (1995). Random numbers. In W. H. Press, S. A. Teukolsky, W. T. Vetterling, & B. P. Flannery (Eds.), Numerical recipes in C: The art of scientific computing (pp. 274-316). Cambridge, UK: Cambridge University Press.

- Rosch, E. H. (1978). Principles of categorization. In E. H. Rosch & B. B. Lloyd (Eds.), Cognition and categorization (pp. 27-48). Hillsdale, NJ: Lawrence Erlbaum Associates.

- Schifferstein, H. N. J., & Spence, C. (2008). Multisensory product experience. In H. N. J. Schifferstein & P. Hekkert (Eds.), Product experience (pp. 133-161). London, UK: Elsevier.

- Spence, C., & Zampini, M. (2006). Auditory contributions to multisensory product perception. Acta Acustica united with Acustica, 92, 1009-1025.

- Susini, P., McAdams, S., Winsberg, S., Perry, I., Viellard, S., & Rodet, X. (2004). Characterizing the sound quality of air-conditioning noise. Applied Acoustics, 65(8), 763-790.

- te Linde, J. (1983). Pictures and words in semantic decisions. In J. C. Yuille (Ed.), Imagery, memory and cognition: Essays in honor of Allen Paivio (pp. 117-129). Hillsdale, NJ: Lawrence Erlbaum Associates.

- Ulusoy, Z. (1999). To design versus to understand design: The role of graphic representations and verbal expression. Design Studies, 20(2), 123-130.

- van Egmond, R. (2004). Emotional experience of frequency modulated sounds: Implications for the design of alarm sounds. In D. de Waard, K. A. Brookhuis, & C. M. Weikert (Eds.), Human factors in design (pp. 345-356). Maastricht, the Netherlands: Shaker Publishing.

- van Egmond, R. (2008). The experience of product sounds. In H. N. J. Schifferstein & P. Hekkert (Eds.), Product experience (pp. 69-89). Amsterdam, the Netherlands: Elsevier.

- van Egmond, R., Desmet, P., & van der Helm, A. (2005). Basic and cognitive emotions in sound perception. In Proceedings of the 4th International Conference on Design and Emotion (pp. 1-11). Ankara, Turkey: Middle East Technical University.

- Vanderveer, N. J. (1979). Confusion errors in identification of environmental sounds. Journal of the Acoustical Society of America, 65(S1), S60-S60.

- Västfjäll, D., Kleiner, M., & Gärling, T. (2003). Affective reactions to interior aircraft sounds. Acta Acustica united with Acustica, 89(4), 693-701.

- von Bismarck, G. (1974). Timbre of steady sounds: A factorial investigation of its verbal attributes. Acta Acustica united with Acustica, 30(3), 146-159.

- Wiegers, T., Langeveld, L., & Vergeest, J. (2011). Shapelanguage: How people describe shapes and shape operations. Design Studies, 32(4), 333-347.

- Yost, W. A. (1991). Auditory image perception and analysis: The basis for hearing. Hearing Research, 56(1-2), 8-18.

- Zwicker, E., & Fastl, H. (1990). Psychoacoustics: Facts and models. Berlin, Germany: Springer.

Appendix

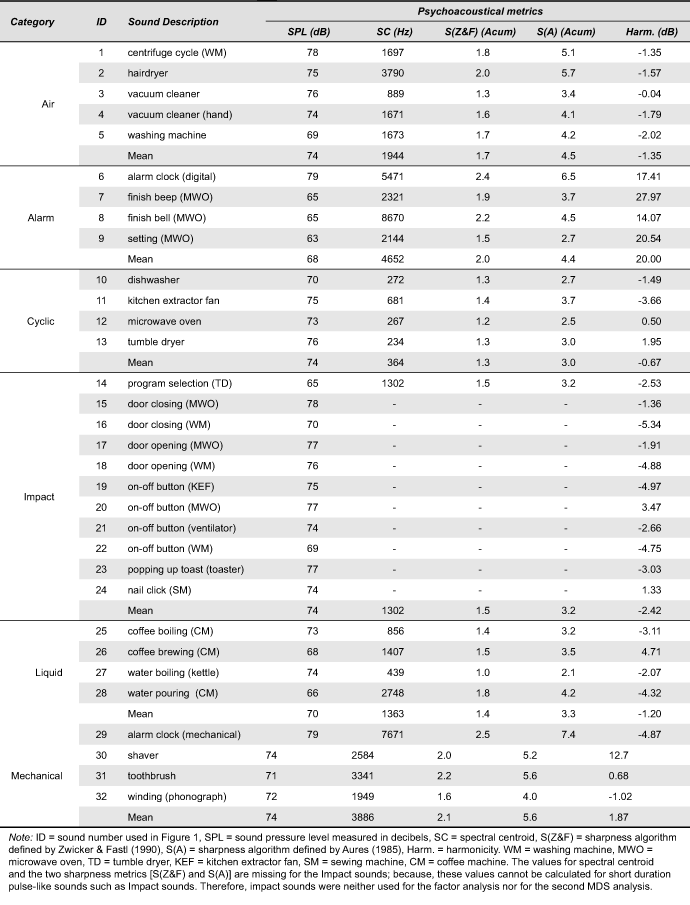

Table A1 presents the psycho-acoustical parameters for each recorded sound. The sound pressure level (SPL) of each sound was measured with a Brüel & Kjær 2260 Investigator. The SPL was used to calibrate the psycho-acoustical analysis software. Harmonicity was calculated using Praat2. Two sharpness parameters (Zwicker & Fastl, 1990; Aures, 1985), the spectral centroid, and 39 critical band levels in ERBs were calculated using Psysound3. To reduce the number of parameters, a principal component analysis (PCA) with Varimax rotation was conducted, which resulted in two factors that together explained 78.94% of the variance. High-frequency critical bands (CB-1747 through CB-15085), the two sharpness parameters, the spectral centroid, and the SPL loaded high and positively on Factor 1 (explaining 44.57% of the variance). Therefore, this factor was interpreted as a combination of sharpness and loudness. Low-frequency critical bands (CB-55 through CB-1545) loaded high and positively on Factor 2, whereas harmonicity loaded high and negatively on Factor 2 (explaining 34.37% of the variance). Therefore, Factor 2 was interpreted as a combination of noisiness and low frequencies. In addition, the regression weights for Factor 1 and Factor 2 were extracted for each sound.
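For readers who wish to reproduce this kind of data reduction, the following is a minimal sketch of a PCA with Varimax rotation followed by regression-method factor scores. It is not the authors' analysis: the statistical package used in the study is not stated, the input here is synthetic random data rather than the measured parameters, and the names `varimax`, `pca_varimax`, and `data` are illustrative assumptions. Because the rotation is orthogonal, it redistributes variance between the retained factors but leaves their total unchanged, which is why the two reported shares (44.57% and 34.37%) add up to the reported total of 78.94%.

```python
import numpy as np

def varimax(loadings, max_iter=100, tol=1e-8):
    """Orthogonally rotate a (variables x factors) loading matrix (Kaiser's varimax)."""
    n_vars, n_factors = loadings.shape
    rotation = np.eye(n_factors)
    criterion = 0.0
    for _ in range(max_iter):
        previous = criterion
        rotated = loadings @ rotation
        # Gradient of the varimax criterion; its SVD yields the next rotation.
        target = rotated**3 - rotated * (rotated**2).sum(axis=0) / n_vars
        u, s, vt = np.linalg.svd(loadings.T @ target)
        rotation = u @ vt
        criterion = s.sum()
        if previous != 0 and criterion / previous < 1 + tol:
            break
    return loadings @ rotation

def pca_varimax(data, n_factors=2):
    """PCA on the correlation matrix, varimax rotation, regression factor scores."""
    z = (data - data.mean(axis=0)) / data.std(axis=0, ddof=1)  # standardize columns
    corr = np.corrcoef(data, rowvar=False)
    eigvals, eigvecs = np.linalg.eigh(corr)          # ascending order
    order = np.argsort(eigvals)[::-1][:n_factors]    # keep the largest components
    unrotated = eigvecs[:, order] * np.sqrt(eigvals[order])
    rotated = varimax(unrotated)
    # Share of total variance per factor: column sum of squared loadings / variables.
    explained = (rotated**2).sum(axis=0) / data.shape[1]
    # Regression-method scores: one value per sound and factor.
    scores = z @ np.linalg.solve(corr, rotated)
    return unrotated, rotated, explained, scores

# Synthetic stand-in data (assumption): 60 "sounds" x 8 "parameters",
# generated from two latent factors plus noise.
rng = np.random.default_rng(0)
latent = rng.normal(size=(60, 2))
mixing = rng.normal(size=(2, 8))
data = latent @ mixing + 0.5 * rng.normal(size=(60, 8))

unrotated, rotated, explained, scores = pca_varimax(data, n_factors=2)
print("Variance explained per rotated factor:", explained)
print("Total variance explained:", explained.sum())
```

Each row of `scores` corresponds to one sound, analogous to the per-sound regression weights reported for Factor 1 and Factor 2.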

Table A1. Psychoacoustical metrics calculated for each product sound and product sound category.